Facebook says it has developed an AI technique that enables machine learning models to retain only certain information while forgetting the rest. The company claims that the technique, Expire-Span, can predict the information most relevant to a task at hand, enabling AI systems to process information at larger scales.

AI models memorize information without distinction, unlike human memory. Mimicking the ability to forget (or not) at the software level is difficult, but it is a worthwhile endeavor in machine learning. Intuitively, if a system can remember only five things, those things should ideally be really important. But state-of-the-art model architectures focus on parts of the data selectively, leading them to struggle with large quantities of information like books or videos and to incur high computing costs.

This can contribute to other problems like catastrophic forgetting, also called catastrophic interference, a phenomenon where AI systems fail to recall what they’ve learned from a training dataset. The result is that the systems have to be constantly reminded of the knowledge they’ve gained or risk becoming “stuck” with their most recent “memories.”

Several proposed solutions to the problem focus on compression. Historical information is compressed into smaller chunks, letting the model extend further into the past. The drawback, however, is “blurry” versions of memory that can affect the accuracy of the model’s predictions.

Facebook’s alternative is Expire-Span, which gradually forgets irrelevant information. Expire-Span works by first predicting which information is most important for a task at hand, based on context. It then assigns each piece of information an expiration date such that when the date passes, the information is deleted from the system.
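The predict, assign, and delete cycle can be pictured with a toy memory store. This is a minimal sketch, not Facebook’s implementation: the `ExpiringMemory` class, the `predict_span` callable, and the hand-written `toy_span` rule are all hypothetical stand-ins for what would be a learned predictor in the real system.

```python
from dataclasses import dataclass


@dataclass
class MemoryItem:
    content: str
    expires_at: int  # last time step at which the item is still kept


class ExpiringMemory:
    """Toy store that deletes items once their expiration step has passed."""

    def __init__(self, predict_span):
        # predict_span stands in for a learned predictor (assumption):
        # any function mapping content to a non-negative number of steps.
        self.predict_span = predict_span
        self.items = []
        self.step = 0

    def add(self, content):
        self.step += 1
        span = self.predict_span(content)
        # Assign the expiration "date" when the item is stored.
        self.items.append(MemoryItem(content, self.step + span))
        # Delete every item whose expiration step is already behind us.
        self.items = [m for m in self.items if m.expires_at >= self.step]

    def contents(self):
        return [m.content for m in self.items]


def toy_span(observation):
    # Hand-written rule for illustration only: keep anything about the
    # yellow door for a long time, let everything else expire at once.
    return 100 if "yellow" in observation else 0


memory = ExpiringMemory(toy_span)
for obs in ["red door", "floor texture", "yellow door", "blue door", "hallway length"]:
    memory.add(obs)
print(memory.contents())
```

After the five observations, the store holds only the long-lived item and the most recent observation, which has not yet had a chance to expire.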

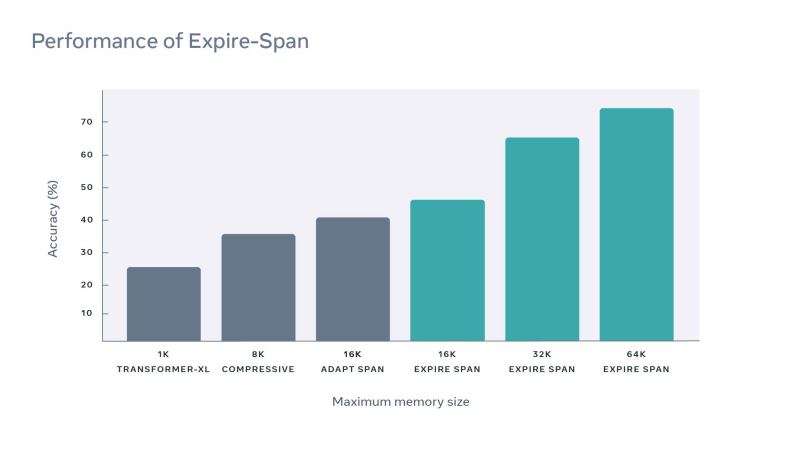

Facebook says that Expire-Span achieves leading results on a benchmark for character-level language modeling and improves efficiency across long-context workloads in language modeling, reinforcement learning, object collision, and algorithmic tasks.

The value of forgetting

It’s believed that without forgetting, humans would have basically no memory at all. If we remembered everything, we’d likely be inefficient because our brains would be swamped with superfluous memories.

Research suggests that one form of forgetting, intrinsic forgetting, involves a certain subset of cells in the brain that degrade physical traces of memories called engrams. The cells reverse the structural changes that created the memory engram, which is preserved through a consolidation process.

New memories are formed through neurogenesis, which can complicate the challenge of retrieving prior memories. It’s theorized that neurogenesis damages the older engrams or makes it harder to isolate the old memories from newer ones.

Expire-Span attempts to induce intrinsic forgetting in AI and capture the neurogenesis process in software form.

Expire-Span

Normally, AI systems tasked with, for example, finding a yellow door in a hallway may memorize information like the color of other doors, the length of the hallway, and the texture of the floor. With Expire-Span, the model can forget unnecessary information processed on the way to the door and remember only the bits essential to the task, like the color of the sought-after door.

To calculate the expiration dates of words, images, video frames, and other information, Expire-Span determines how long the information is preserved as a memory each time a new piece of data is presented. This gradual decay is key to retaining important information without blurring it, Facebook says. Expire-Span essentially makes predictions based on context learned from data and influenced by its surrounding memories.
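One way to picture gradual decay is a weight that stays at 1 while a memory is within its predicted span and then ramps linearly down to 0 instead of vanishing in a single step. The article does not give a formula, so the linear ramp, the `soft_mask` function, and its `ramp` parameter below are assumptions chosen for illustration.

```python
def soft_mask(expire_span, age, ramp=4.0):
    """Weight in [0, 1] for a memory of the given age.

    expire_span: predicted number of steps the memory should be kept.
    age:         steps elapsed since the memory was stored.
    ramp:        hypothetical length of the linear fade-out after expiry.
    """
    remaining = expire_span - age
    # Fully kept while remaining >= 0, fading over `ramp` steps, then gone.
    return max(0.0, min(1.0, 1.0 + remaining / ramp))


# Each new piece of data ages the stored memory by one step.
for age in range(8, 16):
    print(age, soft_mask(expire_span=10, age=age))
```

In this sketch a memory with a predicted span of 10 steps keeps full weight through age 10, fades over the next four steps, and contributes nothing afterward, at which point it could be deleted.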

For example, if an AI system is training to perform a word prediction task, it is possible with Expire-Span to teach the system to remember rare words like names but forget filler words like “the,” “and,” and “of.” By looking at previous, relevant content, Expire-Span predicts whether something can be forgotten or not.

Facebook says that Expire-Span can scale to tens of thousands of pieces of information and has the ability to retain fewer than a thousand bits of it. As a next step, the plan is to investigate how the underlying techniques might be used to incorporate different types of memories into AI systems.

“While this is currently research, we could see the Expire-Span method used in future real-world applications that might benefit from AI that forgets nonessential information,” Facebook wrote in a blog post. “Theoretically, one day, Expire-Span could empower people to more easily retain information they find most important for these types of long-range tasks and memories.”