
Facebook today introduced TextStyleBrush, an AI research project that can copy the style of text in a photo from just a single word. The company claims that TextStyleBrush, which can edit and replace arbitrary text in images, is the first “unsupervised” system of its kind that can recognize both typefaces and handwriting.

AI-generated images have been advancing at a breakneck pace, and they have obvious business applications, like photorealistic translation of languages in augmented reality (AR). (The AR market was anticipated to be worth $18.8 billion by the end of 2020, according to Statista.) But building a system that is flexible enough to understand the nuances of text and handwriting is a difficult challenge, because it means understanding styles not just for typography and calligraphy but also for transformations like rotations, curved text, deformations, background clutter, and image noise.

TextStyleBrush works similarly to the way style brush tools work in word processors, but for text aesthetics in images, according to Facebook. Unlike previous approaches, which define specific parameters such as typeface or target style supervision, it takes a more holistic training approach and disentangles the content of a text image from all aspects of its appearance.

Unsupervised learning

The “unsupervised” part of the system refers to unsupervised learning, the process by which the system was subjected to “unknown” data that had no previously defined categories or labels. TextStyleBrush had to teach itself to classify data, processing the unlabeled data to learn from its inherent structure.
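To make the idea of learning from unlabeled data concrete, here is a minimal sketch using plain k-means clustering on a handful of made-up pixel intensities. This is not how TextStyleBrush works; the function name `kmeans_1d` and the sample values are hypothetical, chosen only to show an algorithm finding two groups without being told which value belongs where.

```python
import random


def kmeans_1d(values, k=2, iterations=20, seed=0):
    """Group unlabeled 1-D values into k clusters with plain k-means.

    Illustrative only: a generic unsupervised algorithm, not the
    method used by TextStyleBrush.
    """
    rng = random.Random(seed)
    # Start from k randomly chosen data points as the initial centers.
    centers = rng.sample(values, k)
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        # Assign each value to its nearest center.
        for v in values:
            nearest = min(range(k), key=lambda i: abs(v - centers[i]))
            clusters[nearest].append(v)
        # Move each center to the mean of its cluster (keep it if empty).
        centers = [
            sum(c) / len(c) if c else centers[i]
            for i, c in enumerate(clusters)
        ]
    return sorted(centers)


# Hypothetical grayscale intensities: some dark, some bright, no labels.
pixels = [12, 15, 9, 20, 230, 240, 225, 250, 18, 235]
centers = kmeans_1d(pixels)
print(centers)
```

No value is ever tagged as "dark" or "bright"; the two groups emerge from the structure of the data alone, which is the property the term "unsupervised" describes.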

As Facebook notes, systems like TextStyleBrush typically involve training with annotated data that teaches the system to classify individual pixels as either “foreground” or “background” objects. But it is difficult to apply this to images captured in the real world. Handwriting can be one pixel in width or less, and collecting high-quality training data requires labeling the foregrounds and backgrounds.

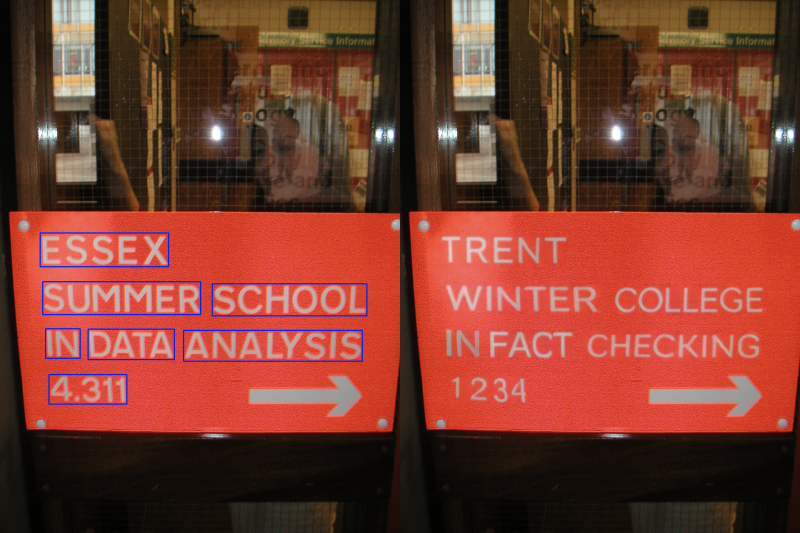

By contrast, given a detected “text box” containing a source style, TextStyleBrush renders new content in the style of the source text using a single sample. While it occasionally struggles with text written on metallic objects and characters in different colors, Facebook says TextStyleBrush proves it is possible to build systems that can learn to transfer text aesthetics with more flexibility than was possible before.

“We hope this work will continue to lower barriers to photorealistic translation [and] creative self-expression,” Facebook said in a blog post. “While this technology is research, it can power a variety of useful applications in the future, like translating text in images to different languages, creating personalized messaging and captions, and maybe one day facilitating real-world translation of street signs using AR.”

The capabilities, methods, and results of the work on TextStyleBrush are available on Facebook’s developer portal. The company says it plans to submit the work to a peer-reviewed journal in the future.