On the heels of a computer vision system that achieved state-of-the-art accuracy with minimal supervision, Facebook today announced a project called Learning from Videos that is designed to automatically learn audio, textual, and visual representations from publicly available Facebook videos. By learning from videos spanning nearly every country and hundreds of languages, Facebook says the project will not only help it improve its core AI systems but also enable entirely new experiences. Already, Learning from Videos, which began in 2020, has led to improved recommendations in Instagram Reels, according to Facebook.

Continuously learning from the world is one of the hallmarks of human intelligence. Just as people quickly learn to recognize places, things, and other people, AI systems could be smarter and more useful if they managed to mimic the way humans learn. As opposed to relying on the labeled datasets used to train many algorithms today, Facebook, Google, and others are looking toward self-supervised techniques that require few or no annotations.

For instance, Facebook says it is using Generalized Data Transformations (GDT), a self-supervised system that learns the relationships between sounds and images, to suggest Instagram Reel clips relevant to recently watched videos while filtering out near-duplicates. Consisting of a series of models trained across dozens of GPUs on a dataset of millions of Reels and videos from Instagram, GDT can learn that an image of an audience clapping probably goes with the sound of applause or that a video of a plane taking off most likely goes with a loud roar. Moreover, by leveraging audio as a signal, the system can surface recommendations based on videos that sound alike or look alike.
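One common way to turn learned representations into recommendations is to compare embedding vectors by cosine similarity and skip candidates so similar that they are probably near-duplicates. The sketch below illustrates that general idea only; it is not a description of how GDT or Instagram's recommender actually works, and the function names, the threshold value, and the toy embeddings are all assumptions.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def recommend(watched, candidates, near_duplicate_threshold=0.99, top_k=2):
    """Rank candidates by similarity to the watched clip, dropping near-duplicates.

    `candidates` maps a clip id to its embedding. The threshold is an
    arbitrary illustrative value, not a tuned one.
    """
    scored = []
    for clip_id, embedding in candidates.items():
        similarity = cosine_similarity(watched, embedding)
        if similarity >= near_duplicate_threshold:
            continue  # almost identical to what was just watched
        scored.append((similarity, clip_id))
    scored.sort(reverse=True)  # highest similarity first
    return [clip_id for _, clip_id in scored[:top_k]]


# Made-up 3-dimensional embeddings; real ones would come from a trained model.
watched_clip = [0.9, 0.1, 0.3]
candidate_clips = {
    "reupload": [0.9, 0.1, 0.31],    # nearly the same clip
    "similar_a": [0.7, 0.4, 0.2],
    "similar_b": [0.5, 0.1, 0.8],
    "unrelated": [-0.6, 0.7, -0.2],
}
print(recommend(watched_clip, candidate_clips))
```

In this toy setup the "reupload" clip is filtered out because its embedding is almost identical to the watched clip, while the remaining candidates are ranked by how closely they match.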

When asked which Facebook and Instagram users were subject to having their content used to train systems like GDT and whether those users were informed the content was being used in this way, a Facebook spokesperson told VentureBeat that the company informs account holders in its data policy that Facebook “uses the information we have to support research and innovation.” In training other computer vision systems such as SEER, a self-supervised AI model that Facebook detailed last week, OneZero notes that the company has purposely excluded user images from the European Union, likely because of GDPR.

Learning from Videos also encompasses Facebook’s work on wav2vec 2.0, an improved machine learning framework for self-supervised speech recognition. The company says that when applied to millions of hours of unlabeled videos and 100 hours of labeled data, wav2vec 2.0 reduced the relative word error rate by 20% compared with supervised-only baselines. As a next step, Facebook says it is working to scale wav2vec 2.0 with millions of additional hours of speech from 25 languages to reduce labeling, bolster the performance of low- and medium-resource models, and improve other speech and audio tasks.
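To make the 20% figure concrete: word error rate (WER) is conventionally the word-level edit distance between a reference transcript and the system's output, divided by the number of reference words, and a relative reduction expresses the improvement as a fraction of the baseline WER. The following is a minimal sketch of that arithmetic; the function names and the example WER values are illustrative assumptions, not figures reported by Facebook.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = word-level edit distance / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # prev[j] is the edit distance between the ref words seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


def relative_wer_reduction(baseline_wer: float, new_wer: float) -> float:
    """Fraction by which new_wer improves on baseline_wer."""
    if baseline_wer <= 0:
        raise ValueError("baseline WER must be positive")
    return (baseline_wer - new_wer) / baseline_wer


# Hypothetical numbers, chosen only to illustrate a 20% relative reduction.
baseline = 0.25
improved = 0.20
reduction = relative_wer_reduction(baseline, improved)
print(f"relative WER reduction: {reduction:.0%}")
```

Note the distinction between relative and absolute change: with these made-up values, a 20% relative reduction takes WER from 25% to 20%, an absolute drop of five percentage points.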

In a related effort, to make it easier to search across videos, Facebook says it is using a system called the Audio Visual Textual (AVT) model that aggregates and compares sound and visual information from videos as well as titles, captions, and descriptions. Given a command like “Show me every time we sang to Grandma,” the AVT model can find the matching moments and highlight the nearest timestamps in the video. Facebook says it is working to apply the model to millions of videos before it begins testing it across its platform. It’s also adding speech recognition as one of the inputs to the AVT model, which will allow the system to respond to phrases like “Show me the news show that was talking about Yosemite.”

TimeSformer

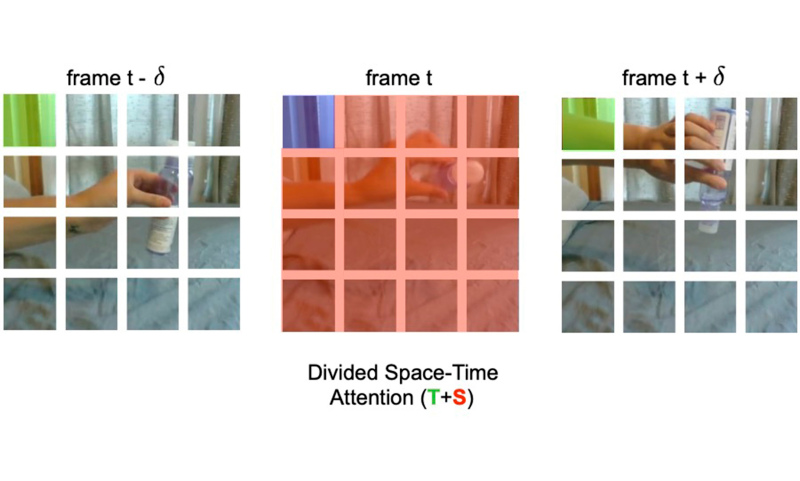

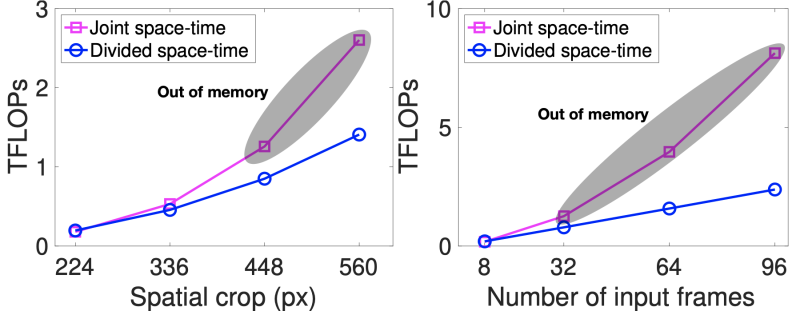

The Learning from Videos project also gave rise to TimeSformer, a Facebook-developed framework for video understanding that is based purely on the Transformer architecture. Transformers employ a trainable attention mechanism that specifies the dependencies between elements of each input sequence (for instance, amino acids within a protein). It’s this that enables them to achieve state-of-the-art results in areas of machine learning including natural language processing, neural machine translation, document generation and summarization, and image and music generation.
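As a rough illustration of the attention mechanism described above, the sketch below implements scaled dot-product self-attention, the standard formulation used in Transformers, in plain Python. It is not TimeSformer's code: the helper names and toy input are assumptions made for illustration, and the learned projection matrices that make attention trainable are omitted.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def self_attention(queries, keys, values):
    """Scaled dot-product attention over one sequence.

    Returns (outputs, weights), where weights[i][j] is how strongly
    position i attends to position j.
    """
    scale = math.sqrt(len(keys[0]))
    weights = [softmax([dot(q, k) / scale for k in keys]) for q in queries]
    dim = len(values[0])
    # Each output is the attention-weighted average of the value vectors.
    outputs = [
        [sum(w * v[d] for w, v in zip(row, values)) for d in range(dim)]
        for row in weights
    ]
    return outputs, weights


# Toy sequence of three 2-dimensional elements (made-up values). In a real
# Transformer, queries, keys, and values come from learned linear projections
# of the input; here the raw vectors are reused to keep the sketch short.
sequence = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(sequence, sequence, sequence)
for i, row in enumerate(weights):
    print(i, [round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and expresses how much one element of the sequence depends on every other element, which is the "dependencies between elements" idea in miniature.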

Facebook claims that TimeSformer, short for Time-Space Transformer, attains the best reported numbers on a range of action recognition benchmarks. It also takes roughly one-third as long to train as comparable models. And it requires less than one-tenth the amount of compute for inference and can learn from video clips up to 102 seconds in length, much longer than most video-analyzing AI models. Facebook AI research scientist Lorenzo Torresani told VentureBeat that TimeSformer can be trained in 14 hours with 32 GPUs.

“Since TimeSformer specifically enables analysis of much longer videos, there’s also the opportunity for interesting future applications such as episodic memory retrieval — ability to detect particular objects of interest that were seen by an agent in the past — and classifying multi-step activities in real time like recognizing a recipe when someone is cooking with their AR glasses on,” Torresani said. “Those are just a few examples of where we see this technology going in the future.”

It’s Facebook’s assertion that systems like TimeSformer, GDT, wav2vec 2.0, and AVT will advance research to teach machines to understand long-form actions in videos, an important step for AI applications geared toward human understanding. The company also expects they’ll form the foundation of applications that can comprehend what’s happening in videos on a more granular level.

“[All] these models will be broadly applicable, but most are research for now. In the future, when applied in production, we believe they could do things like caption talks, speeches, and instructional videos; understand product mentions in videos; and search and classification of archives of recordings,” Geoffrey Zweig, director at Facebook AI, told VentureBeat. “We are just starting to scratch the surface of self-supervised learning. There’s lots to do to build upon the models that we use, and we want to do so with speed and at scale for broad applicability.”

Facebook declined to respond directly to VentureBeat’s question about how any bias in Learning from Videos models might be mitigated, instead saying: “In general, we have a cross-functional, multidisciplinary team dedicated to studying and advancing responsible AI and algorithmic fairness, and we’re committed to working toward the right approaches. We take this issue seriously, and have processes in place to ensure that we’re thinking carefully about the data that we use to train our models.”


Research has shown that state-of-the-art image-classifying AI models trained on ImageNet, a popular (but problematic) dataset containing photos scraped from the internet, automatically learn humanlike biases about race, gender, weight, and more. Countless studies have demonstrated that facial recognition is susceptible to bias. It’s even been shown that prejudices can creep into the AI tools used to create art, potentially contributing to false perceptions about social, cultural, and political aspects of the past and hindering awareness about important historical events.

Facebook chief AI scientist Yann LeCun recently admitted to Fortune that fully self-supervised computer vision systems can pick up the biases, including racial and gender stereotypes, inherent in the data. In acknowledgment of the problem, a year ago Facebook set up new teams to look for racial bias in the algorithms that drive its social network as well as Instagram. But a bombshell report in MIT Tech Review this week revealed that at least some of Facebook’s internal efforts to mitigate bias were co-opted to protect growth or in anticipation of regulation. The report further alleges that one division’s work, Responsible AI, became essentially irrelevant to fixing the larger problems of misinformation, extremism, and political polarization.