
Last week, Google Research held an online workshop on the conceptual understanding of deep learning. The workshop, which featured presentations by award-winning computer scientists and neuroscientists, discussed how new findings in deep learning and neuroscience can help create better artificial intelligence systems.

While all the presentations and discussions were worth watching (and I might revisit them in the coming weeks), one in particular stood out for me: a talk on word representations in the brain by Christos Papadimitriou, professor of computer science at Columbia University.

In his presentation, Papadimitriou, a recipient of the Gödel Prize and Knuth Prize, discussed how our growing understanding of information-processing mechanisms in the brain might help create algorithms that are more robust in understanding and engaging in conversations. Papadimitriou presented a simple and efficient model that explains how different areas of the brain inter-communicate to solve cognitive problems.

“What is happening now is perhaps one of the world’s greatest wonders,” Papadimitriou said, referring to how he was communicating with the audience. The brain translates structured knowledge into airwaves that are transferred across different mediums and reach the ears of the listener, where they are again processed and transformed into structured knowledge by the brain.

“There’s little doubt that all of this happens with spikes, neurons, and synapses. But how? This is a huge question,” Papadimitriou said. “I believe that we are going to have a much better idea of the details of how this happens over the next decade.”

Assemblies of neurons in the brain

The cognitive and neuroscience communities are trying to make sense of how neural activity in the brain translates to language, mathematics, logic, reasoning, planning, and other functions. If scientists succeed at formulating the workings of the brain in terms of mathematical models, they will open a new door to creating artificial intelligence systems that can emulate the human mind.

A lot of studies focus on activity at the level of single neurons. Until a few decades ago, scientists thought that single neurons corresponded to single thoughts. The most popular example is the “grandmother cell” theory, which claims there’s a single neuron in the brain that spikes every time you see your grandmother. More recent discoveries have refuted this claim and have shown that large groups of neurons are associated with each concept, and that there might be overlaps between the groups of neurons that link to different concepts.

These groups of brain cells are called “assemblies,” which Papadimitriou describes as “a highly connected, stable set of neurons which represent something: a word, an idea, an object, etc.”

Award-winning neuroscientist György Buzsáki describes assemblies as “the alphabet of the brain.”

A mathematical model of the brain

To better understand the role of assemblies, Papadimitriou proposes a mathematical model of the brain called “interacting recurrent nets.” Under this model, the brain is divided into a finite number of areas, each of which contains several million neurons. There is recursion within each area, which means the neurons interact with each other. And each of these areas has connections to several other areas. These inter-area connections can be excited or inhibited.

This model provides randomness, plasticity, and inhibition. Randomness means the neurons in each brain area are randomly connected. Also, different areas have random connections between them. Plasticity enables the connections between the neurons and areas to adjust through experience and training. And inhibition means that at any moment, only a limited number of neurons are excited.

Papadimitriou describes this as a very simple mathematical model that is based on “the three main forces of life.”
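The three ingredients can be made concrete with a toy, single-area sketch. This is an illustration under stated assumptions, not the authors' code: the function names, the area size `n`, the connection probability `p`, the cap size `k`, and the multiplicative update by a factor of `1 + beta` are all hypothetical choices made for the example, and the sizes are far smaller than the millions of neurons the model describes.

```python
import random


def random_connections(n_pre, n_post, p, rng):
    """Randomness: each directed synapse exists independently with probability p."""
    return [[1.0 if rng.random() < p else 0.0 for _ in range(n_post)]
            for _ in range(n_pre)]


def total_input(weights, firing):
    """Sum the synaptic weight each target neuron receives from the firing set."""
    n_post = len(weights[0])
    return [sum(weights[i][j] for i in firing) for j in range(n_post)]


def k_cap(inputs, k):
    """Inhibition: only the k neurons with the highest input are allowed to fire.

    Ties are broken by neuron index because sorted() is stable.
    """
    ranked = sorted(range(len(inputs)), key=lambda j: inputs[j], reverse=True)
    return set(ranked[:k])


def hebbian_update(weights, pre_firing, post_firing, beta):
    """Plasticity: strengthen existing synapses from firing to newly firing neurons."""
    for i in pre_firing:
        for j in post_firing:
            if weights[i][j] > 0.0:
                weights[i][j] *= 1.0 + beta


def one_step(n=200, k=20, p=0.1, beta=0.1, seed=0):
    """Run one round: fire a set, pick winners, strengthen, and measure the input again."""
    rng = random.Random(seed)
    weights = random_connections(n, n, p, rng)
    firing = set(range(k))  # assumed: the first k neurons fire initially
    inputs = total_input(weights, firing)
    winners = k_cap(inputs, k)
    before = sum(inputs[j] for j in winners)
    hebbian_update(weights, firing, winners, beta)
    after_inputs = total_input(weights, firing)
    after = sum(after_inputs[j] for j in winners)
    return winners, before, after
```

Calling `one_step()` returns the set of winning neurons together with the total input they received before and after the plasticity update. The design point is that the winners are easier to excite the next time the same neurons fire, which is the mechanism that lets a group of neurons stabilize.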

Along with a group of scientists from different academic institutions, Papadimitriou detailed this model in a paper published last year in the peer-reviewed scientific journal Proceedings of the National Academy of Sciences. Assemblies were the key component of the model and enabled what the scientists called “assembly calculus,” a set of operations that can enable the processing, storing, and retrieval of information.

“The operations are not just pulled out of thin air. I believe these operations are real,” Papadimitriou said. “We can prove mathematically and validate by simulations that these operations correspond to true behaviors… these operations correspond to behaviors that have been observed [in the brain].”
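A simulation of this kind can be sketched for one operation. The article does not list the operations; the paper describes one called projection, in which a stimulus fires repeatedly into an area until a set of neurons there comes to represent it. The code below is a minimal toy version under assumptions: the name `project`, the parameters `n`, `k`, `p`, `beta`, and `rounds`, and the tiny sizes are all hypothetical and chosen for illustration, not taken from the authors' implementation.

```python
import random


def random_weights(n_pre, n_post, p, rng):
    """Create a random directed graph: each synapse exists with probability p."""
    return [[1.0 if rng.random() < p else 0.0 for _ in range(n_post)]
            for _ in range(n_pre)]


def k_cap(inputs, k):
    """Keep the k neurons with the highest input (ties broken by index)."""
    ranked = sorted(range(len(inputs)), key=lambda j: inputs[j], reverse=True)
    return set(ranked[:k])


def strengthen(weights, pre, post, beta):
    """Multiply existing synapses from firing pre to firing post by (1 + beta)."""
    for i in pre:
        row = weights[i]
        for j in post:
            if row[j] > 0.0:
                row[j] *= 1.0 + beta


def project(n=300, k=17, p=0.05, beta=0.1, rounds=10, seed=0):
    """Fire a fixed stimulus into one area for several rounds.

    Returns the final set of winners and, for each round, how many
    winners were also winners in the previous round.
    """
    rng = random.Random(seed)
    stimulus = range(k)                    # assumed: k stimulus neurons, all firing
    stim_w = random_weights(k, n, p, rng)  # stimulus -> area synapses
    rec_w = random_weights(n, n, p, rng)   # recurrent synapses within the area
    winners = set()
    overlap_history = []
    for _ in range(rounds):
        inputs = [
            sum(stim_w[i][j] for i in stimulus) + sum(rec_w[i][j] for i in winners)
            for j in range(n)
        ]
        new_winners = k_cap(inputs, k)
        strengthen(stim_w, stimulus, new_winners, beta)
        strengthen(rec_w, winners, new_winners, beta)
        overlap_history.append(len(new_winners & winners))
        winners = new_winners
    return winners, overlap_history
```

Printing the second return value of `project()` shows how many winners carried over from one round to the next. In the paper, the set is reported to stabilize into an assembly; a toy of this size is not guaranteed to behave the same way, so treat the output as exploratory.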

Papadimitriou and his colleagues hypothesize that assemblies and assembly calculus are the correct model for explaining cognitive functions of the brain such as reasoning, planning, and language.

“Much of cognition could fit that,” Papadimitriou said in his talk at the Google deep learning conference.

Natural language processing with assembly calculus

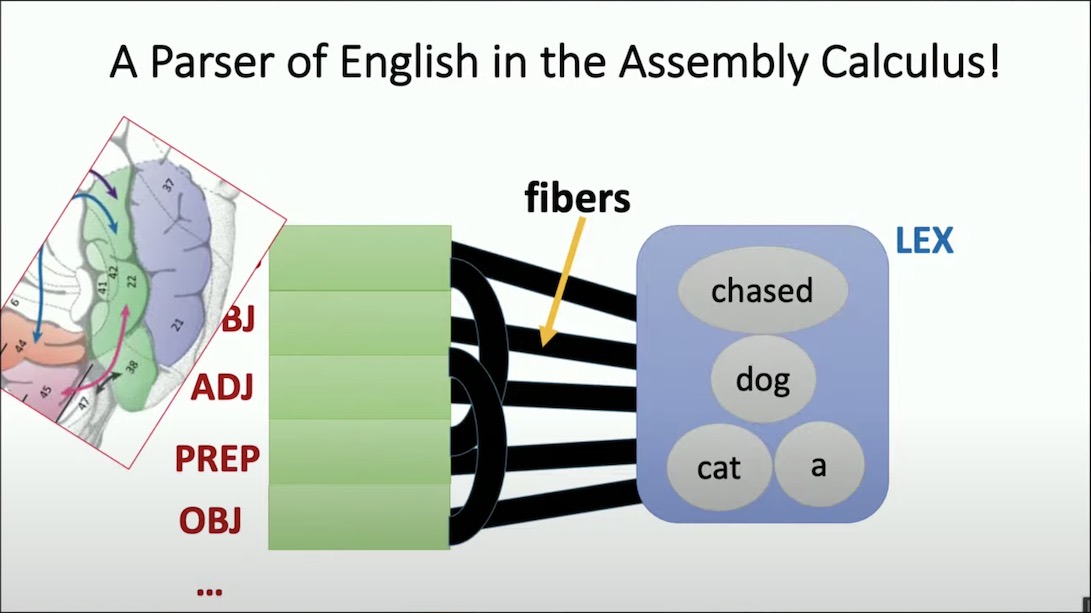

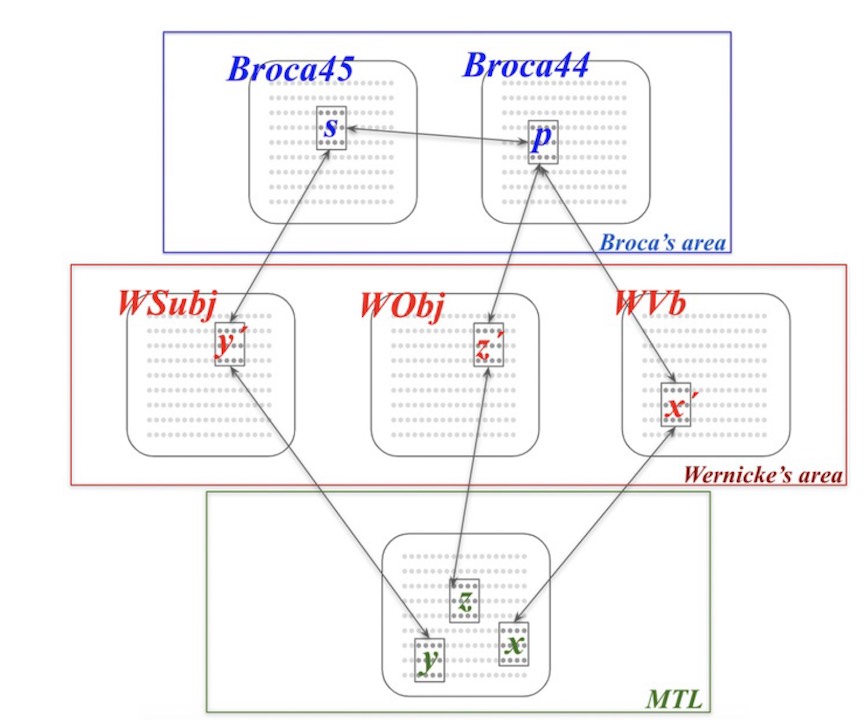

To test their model of the mind, Papadimitriou and his colleagues tried implementing a natural language processing system that uses assembly calculus to parse English sentences. In effect, they were trying to create an artificial intelligence system that simulates the areas of the brain that house the assemblies corresponding to lexicon and language understanding.

“What happens is that if a sequence of words excites these assemblies in lex, this engine is going to produce a parse of the sentence,” Papadimitriou said.

The system works exclusively through simulated neuron spikes (as the brain does), and these spikes are caused by assembly calculus operations. The assemblies correspond to areas in the medial temporal lobe, Wernicke’s area, and Broca’s area, three parts of the brain that are highly engaged in language processing. The model receives a sequence of words and produces a syntax tree. And their experiments show that in terms of speed and frequency of neuron spikes, their model’s activity corresponds very closely to what happens in the brain.

The AI model is still very rudimentary and is missing many important parts of language, Papadimitriou acknowledges. The researchers are working on plans to fill the linguistic gaps that exist. But they believe that all these pieces can be added with assembly calculus, a hypothesis that will need to pass the test of time.

“Can this be the neural basis of language? Are we all born with such a thing in [the left hemisphere of our brain],” Papadimitriou asked. There are still many questions about how language works in the human mind and how it relates to other cognitive functions. But Papadimitriou believes that the assembly model brings us closer to understanding these functions and answering the remaining questions.

Language parsing is just one way to test the assembly calculus theory. Papadimitriou and his collaborators are working on other applications, such as learning and planning in the way that children do at a very young age.

“The hypothesis is that the assembly calculus, or something like it, fills the bill for access logic,” Papadimitriou said. “In other words, it is a useful abstraction of the way our brain does computation.”

Ben Dickson is a software engineer and the founder of TechTalks. He writes about technology, business, and politics.

This story originally appeared on Bdtechtalks.com. Copyright 2021