Since the early years of artificial intelligence, scientists have dreamed of creating computers that can “see” the world. As vision plays a key role in many things we do every day, cracking the code of computer vision seemed to be one of the major steps toward developing artificial general intelligence.

But like many other goals in AI, computer vision has proven to be easier said than done. In 1966, scientists at MIT launched “The Summer Vision Project,” a two-month effort to create a computer system that could identify objects and background areas in images. But it took much more than a summer break to achieve those goals. In fact, it wasn’t until the early 2010s that image classifiers and object detectors became flexible and reliable enough to be used in mainstream applications.

In the past decades, advances in machine learning and neuroscience have helped make great strides in computer vision. But we still have a long way to go before we can build AI systems that see the world as we do.

Biological and Computer Vision, a book by Harvard Medical School Professor Gabriel Kreiman, provides an accessible account of how humans and animals process visual data and how far we’ve come toward replicating these functions in computers.

Kreiman’s book helps readers understand the differences between biological and computer vision. The book details how billions of years of evolution have equipped us with a complicated visual processing system, and how studying it has helped inspire better computer vision algorithms. Kreiman also discusses what separates contemporary computer vision systems from their biological counterparts.

While I would recommend a full read of Biological and Computer Vision to anyone who is interested in the field, I’ve tried here (with some help from Gabriel himself) to lay out some of my key takeaways from the book.

Hardware differences

In the introduction to Biological and Computer Vision, Kreiman writes, “I am particularly excited about connecting biological and computational circuits. Biological vision is the product of millions of years of evolution. There is no reason to reinvent the wheel when developing computational models. We can learn from how biology solves vision problems and use the solutions as inspiration to build better algorithms.”

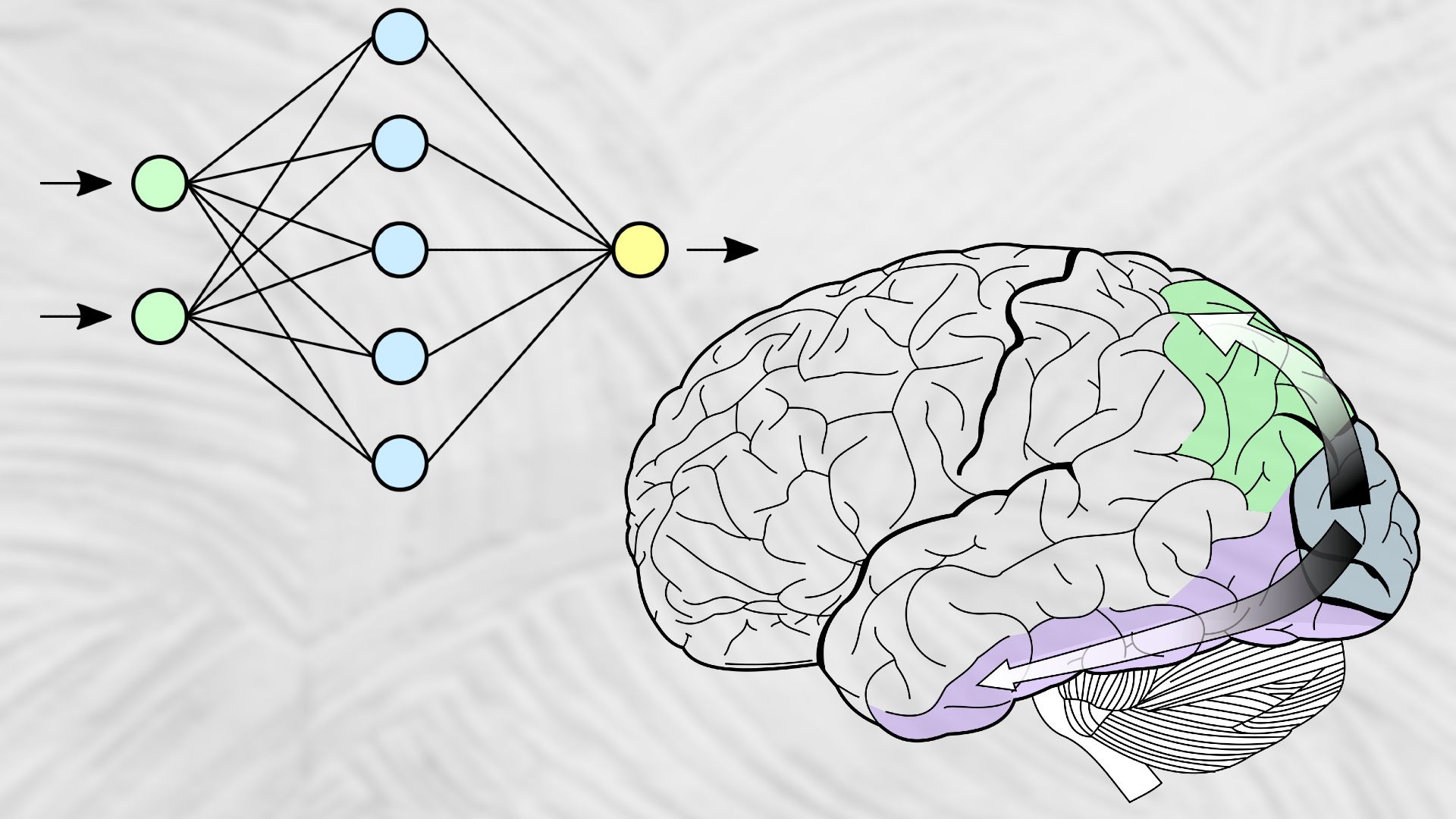

And indeed, the study of the visual cortex has been a great source of inspiration for computer vision and AI. But before being able to digitize vision, scientists had to overcome the huge hardware gap between biological and computer vision. Biological vision runs on an interconnected network of cortical cells and organic neurons. Computer vision, on the other hand, runs on electronic chips composed of transistors.

Therefore, a theory of vision must be defined at a level that can be implemented in computers in a way that is comparable to living beings. Kreiman calls this the “Goldilocks resolution,” a level of abstraction that is neither too detailed nor too simplified.

For instance, early efforts in computer vision tried to tackle the problem at a very abstract level, in a way that ignored how human and animal brains recognize visual patterns. Those approaches have proven to be very brittle and inefficient. On the other hand, studying and simulating brains at the molecular level would prove to be computationally inefficient.

“I am not a big fan of what I call ‘copying biology,’” Kreiman told TechTalks. “There are many aspects of biology that can and should be abstracted away. We probably do not need units with 20,000 proteins and a cytoplasm and complex dendritic geometries. That would be too much biological detail. On the other hand, we cannot merely study behavior—that is not enough detail.”

In Biological and Computer Vision, Kreiman defines the Goldilocks scale of neocortical circuits as neuronal activities per millisecond. Advances in neuroscience and medical technology have made it possible to study the activities of individual neurons at millisecond time granularity.

And the results of these studies have helped develop different types of artificial neural networks, AI algorithms that loosely simulate the workings of cortical areas of the mammal brain. In recent years, neural networks have proven to be the most efficient algorithm for pattern recognition in visual data and have become the key component of many computer vision applications.

Architecture differences

Recent decades have seen a slew of innovative work in the field of deep learning, which has helped computers mimic some of the functions of biological vision. Convolutional layers, inspired by studies of the animal visual cortex, are very efficient at finding patterns in visual data. Pooling layers help generalize the output of a convolutional layer and make it less sensitive to the displacement of visual patterns. Stacked on top of each other, blocks of convolutional and pooling layers can go from finding small patterns (corners, edges, etc.) to complex objects (faces, chairs, cars, etc.).
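To make the role of the two layer types more concrete, here is a minimal sketch in plain Python. It is an illustration only, not code from the book: the toy image, the hand-picked `EDGE_KERNEL`, and the helper names `conv2d`, `max_pool`, and `vertical_edge_image` are all assumptions made for this example. A real network learns its kernels from data instead of having them written by hand.

```python
def conv2d(image, kernel):
    """Slide a small kernel over a 2D list (no padding, stride 1)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def max_pool(feature_map, size=2):
    """Keep the strongest response in each non-overlapping size x size window."""
    out_h = len(feature_map) // size
    out_w = len(feature_map[0]) // size
    return [
        [
            max(feature_map[r * size + i][c * size + j]
                for i in range(size) for j in range(size))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def vertical_edge_image(edge_col, height=5, width=6):
    """Hypothetical toy image: dark (0) left of edge_col, bright (1) from it on."""
    return [[1 if c >= edge_col else 0 for c in range(width)]
            for _ in range(height)]


# Hand-written kernel that responds to dark-to-bright vertical edges.
EDGE_KERNEL = [[-1, 1], [-1, 1]]

image_a = vertical_edge_image(edge_col=3)
image_b = vertical_edge_image(edge_col=4)  # same edge, shifted by one pixel

features_a = conv2d(image_a, EDGE_KERNEL)  # each row: [0, 0, 2, 0, 0]
features_b = conv2d(image_b, EDGE_KERNEL)  # each row: [0, 0, 0, 2, 0]

pooled_a = max_pool(features_a)  # [[0, 2], [0, 2]]
pooled_b = max_pool(features_b)  # [[0, 2], [0, 2]]
```

The convolution output moves when the edge moves, but the pooled output is identical for both images. That tolerance only holds while the shift stays inside one pooling window, which is one reason practical networks stack many such blocks.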

But there’s still a mismatch between the high-level architecture of artificial neural networks and what we know about the mammal visual cortex.

“The word ‘layers’ is, unfortunately, a bit ambiguous,” Kreiman said. “In computer science, people use layers to connote the different processing stages (and a layer is mostly analogous to a brain area). In biology, each brain region contains six cortical layers (and subdivisions). My hunch is that six-layer structure (the connectivity of which is sometimes referred to as a canonical microcircuit) is quite crucial. It remains unclear what aspects of this circuitry should we include in neural networks. Some may argue that aspects of the six-layer motif are already incorporated (e.g. normalization operations). But there is probably enormous richness missing.”

Also, as Kreiman highlights in Biological and Computer Vision, information in the brain moves in several directions. Light signals move from the retina through V1, V2, and other layers of the visual cortex on to the inferior temporal cortex. But each layer also provides feedback to its predecessors. And within each layer, neurons interact and pass information between each other. All these interactions and interconnections help the brain fill in the gaps in visual input and make inferences when it has incomplete information.

In contrast, in artificial neural networks, data usually moves in a single direction. Convolutional neural networks are “feedforward networks,” which means information only goes from the input layer to the higher and output layers.

There’s a feedback mechanism called “backpropagation,” which helps correct mistakes and tune the parameters of neural networks. But backpropagation is computationally expensive and only used during the training of neural networks. And it’s not clear if backpropagation directly corresponds to the feedback mechanisms of cortical layers.
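The split between the forward pass and backpropagation can be sketched with a deliberately tiny network. The example below is a rough illustration under my own assumptions, not anything taken from the book: the two-weight network, the `tanh` activation, the squared-error loss, the learning rate, and the function names are all hypothetical choices made to keep the arithmetic readable.

```python
import math


def forward(params, x):
    """Feedforward pass: input -> one hidden unit -> output."""
    w1, w2 = params
    hidden = math.tanh(w1 * x)
    output = w2 * hidden
    return hidden, output


def loss(params, x, target):
    """Squared error between the network output and the target."""
    _, output = forward(params, x)
    return 0.5 * (output - target) ** 2


def backprop(params, x, target):
    """Send the output error backward with the chain rule; return (dL/dw1, dL/dw2)."""
    w1, w2 = params
    hidden, output = forward(params, x)
    d_output = output - target                  # dL/d(output)
    d_w2 = d_output * hidden                    # gradient for the output weight
    d_hidden = d_output * w2                    # error passed back to the hidden unit
    d_w1 = d_hidden * (1 - hidden ** 2) * x     # tanh'(z) = 1 - tanh(z)^2
    return d_w1, d_w2


def train(params, x, target, lr=0.1, steps=500):
    """Gradient descent: the backward pass runs only inside this loop."""
    w1, w2 = params
    for _ in range(steps):
        d_w1, d_w2 = backprop((w1, w2), x, target)
        w1 -= lr * d_w1
        w2 -= lr * d_w2
    return w1, w2


initial = (0.5, -0.3)           # arbitrary starting weights
x, target = 1.5, 0.8            # a single made-up training example

trained = train(initial, x, target)
_, prediction = forward(trained, x)   # inference: forward pass only, no feedback
```

Once training is done, making a prediction uses `forward` alone. The backward flow of error exists only to adjust weights, which is quite different from feedback that shapes perception while it is happening.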

On the other hand, recurrent neural networks, which combine the output of higher layers into the input of their previous layers, still have limited use in computer vision.

In our conversation, Kreiman suggested that lateral and top-down flow of information can be crucial to bringing artificial neural networks closer to their biological counterparts.

“Horizontal connections (i.e., connections for units within a layer) may be critical for certain computations such as pattern completion,” he said. “Top-down connections (i.e., connections from units in a layer to units in a layer below) are probably essential to make predictions, for attention, to incorporate contextual information, etc.”

He also pointed out that neurons have “complex temporal integrative properties that are missing in current networks.”
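Kreiman’s remark about horizontal connections and pattern completion can be illustrated with a classic toy model. The sketch below uses a Hopfield-style network, which is my own choice for illustration and not a model proposed in the book or in our conversation. Every unit is connected to every other unit in the same layer, and the function names and the eight-unit pattern are hypothetical.

```python
def train_hopfield(patterns):
    """Build lateral weights with a Hebbian rule: units that agree get linked."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for pattern in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    weights[i][j] += pattern[i] * pattern[j] / n
    return weights


def recall(weights, state, sweeps=5):
    """Let each unit repeatedly update itself from its neighbors within the layer."""
    state = list(state)
    n = len(state)
    for _ in range(sweeps):
        for i in range(n):
            total = sum(weights[i][j] * state[j] for j in range(n))
            state[i] = 1 if total >= 0 else -1
    return state


# One stored pattern of +1/-1 activations (made up for this example).
stored = [1, -1, 1, -1, 1, -1, 1, -1]
weights = train_hopfield([stored])

# The second half of the input is missing (0 means "no evidence").
partial = [1, -1, 1, -1, 0, 0, 0, 0]
completed = recall(weights, partial)  # [1, -1, 1, -1, 1, -1, 1, -1]
```

The visible half of the input is enough for the lateral connections to restore the missing half. This is only a cartoon of what cortical circuits might do, but it shows the kind of computation a purely feedforward pass does not perform.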

Goal differences

Evolution has managed to develop a neural architecture that can accomplish many tasks. Several studies have shown that our visual system can dynamically tune its sensitivities to the common. Creating computer vision systems that have this kind of flexibility remains a major challenge, however.

Current computer vision systems are designed to accomplish a single task. We have neural networks that can classify objects, localize objects, segment images into different objects, describe images, generate images, and more. But each neural network can accomplish only a single task.

“A central issue is to understand ‘visual routines,’ a term coined by Shimon Ullman; how can we flexibly route visual information in a task-dependent manner?” Kreiman said. “You can essentially answer an infinite number of questions on an image. You don’t just label objects, you can count objects, you can describe their colors, their interactions, their sizes, etc. We can build networks to do each of these things, but we do not have networks that can do all of these things simultaneously. There are interesting approaches to this via question/answering systems, but these algorithms, exciting as they are, remain rather primitive, especially in comparison with human performance.”

Integration differences

In humans and animals, vision is closely related to the senses of smell, touch, and hearing. The visual, auditory, somatosensory, and olfactory cortices interact and pick up cues from each other to adjust their inferences of the world. In AI systems, on the other hand, each of these things exists separately.

Do we need this kind of integration to make better computer vision systems?

“As scientists, we often like to divide problems to conquer them,” Kreiman said. “I personally think that this is a reasonable way to start. We can see very well without smell or hearing. Consider a Chaplin movie (and remove all the minimal music and text). You can understand a lot. If a person is born deaf, they can still see very well. Sure, there are lots of examples of interesting interactions across modalities, but mostly I think that we will make lots of progress with this simplification.”

However, a more complicated matter is the integration of vision with more complex areas of the brain. In humans, vision is deeply integrated with other brain functions such as logic, reasoning, language, and common sense knowledge.

“Some (most?) visual problems may ‘cost’ more time and require integrating visual inputs with existing knowledge about the world,” Kreiman said.

He pointed to the following image of former U.S. president Barack Obama as an example.

To understand what is going on in this picture, an AI agent would need to know what the person on the scale is doing, what Obama is doing, who is laughing and why they are laughing, etc. Answering these questions requires a wealth of information, including world knowledge (scales measure weight), physics knowledge (a foot on a scale exerts a force), psychological knowledge (many people are self-conscious about their weight and would be surprised if their weight is well above the usual), and social understanding (some people are in on the joke, some are not).

“No current architecture can do this. All of this will require dynamics (we do not appreciate all of this immediately and usually use many fixations to understand the image) and integration of top-down signals,” Kreiman said.

Areas such as language and common sense are themselves great challenges for the AI community. But it remains to be seen whether they can be solved separately and integrated together along with vision, or whether integration itself is the key to solving all of them.

“At some point we need to get into all of these other aspects of cognition, and it is hard to imagine how to integrate cognition without any reference to language and logic,” Kreiman said. “I expect that there will be major exciting efforts in the years to come incorporating more of language and logic in vision models (and conversely incorporating vision into language models as well).”

Ben Dickson is a software engineer and the founder of TechTalks. He writes about technology, business, and politics.