
AI-powered speech transcription platforms are a dime a dozen in a market estimated to be worth more than $1.6 billion. Deepgram and Otter.ai build voice recognition models for cloud-based real-time processing, while Verbit offers tech not unlike that of Oto, which combines intonation with acoustic data to bolster speech understanding. Amazon, Google, Facebook, and Microsoft also offer their own speech transcription services.

But a new entrant launching out of beta this week claims to take an approach that yields superior accuracy. Called Soniox, the company leverages vast amounts of unlabeled audio and text to teach its algorithms to recognize speech with accents, background noise, and “far-field” recording. In practice, Soniox says that its system correctly transcribes 24% more words than other speech-to-text systems, achieving “super-human” recognition on “most domains of human knowledge.”
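Soniox did not say how it measured that gain. For context, transcription accuracy is commonly reported as word error rate (WER): the word-level edit distance between a reference transcript and the system output, divided by the number of reference words. The sketch below is a minimal illustration of that standard metric, not Soniox's evaluation code; the function name and the lowercase-and-split tokenization are assumptions made for the example.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words.

    Illustrative only: real evaluations usually normalize punctuation,
    numerals, and casing more carefully than a lowercase split.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from output
            insertion = curr[j - 1] + 1  # extra word in output
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)
```

Word accuracy is then often quoted as one minus WER, so a claim about correctly transcribing more words corresponds to a lower error rate on the same reference transcripts.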

Those are bold claims, but Soniox founder and CEO Klemen Simonic says the accuracy improvements arise from the platform’s unsupervised learning techniques. With unsupervised learning, an algorithm (in Soniox’s case, a speech recognition algorithm) is fed “unknown” data for which no previously defined labels exist. The system must teach itself to classify the data, processing it to learn from its structure.

Unsupervised speech

At the advent of the modern AI era, when it was discovered that powerful hardware and large datasets could yield strong predictive results, the dominant form of machine learning fell into a category known as supervised learning. Supervised learning is defined by its use of labeled datasets to train algorithms to classify data, predict outcomes, and more.

Simonic, a former Facebook researcher and engineer who helped build the speech team at the social network, notes that supervised learning in speech-to-text is both time-consuming and expensive. Companies have to obtain tens of thousands of hours of audio and recruit human teams to manually transcribe the data. And this same process has to be repeated for each language.

“Google and Facebook have more than 50,000 hours of transcribed audio. One has to invest millions, more like tens of millions, of dollars into collecting transcribed data,” Simonic told VentureBeat via email. “Only then one can train a speech recognition AI on the transcribed data.”

A technique known as semi-supervised learning offers a potential solution. It can accept partially labeled data, and Google recently used it to obtain state-of-the-art results in speech recognition. In the absence of labels, however, unsupervised learning (also known as self-supervised learning) is the only way to fill gaps in knowledge.

According to Simonic, Soniox’s self-supervised learning pipeline sources audio and text from the internet. In the first iteration of training, the company used the LibriSpeech dataset, which contains 960 hours of transcribed audiobooks.

Soniox’s iterative approach continuously refines the platform’s algorithms, enabling them to recognize more words as the system gains access to more data. Currently, Soniox’s vocabulary ranges from diverse people, places, and geography to domains including education, technology, engineering, medicine, health, law, science, art, history, food, sports, and more.
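Soniox has not published the details of its training procedure. As a rough illustration of how a model can improve iteratively from unlabeled data, the toy sketch below uses generic self-training (pseudo-labeling) on one-dimensional points: a small labeled seed set defines two class centroids, confident predictions on unlabeled points are added back as training data, and the centroids are re-estimated each round. Everything here is hypothetical, including the function name `self_train`, the `margin` confidence threshold, and the data. It stands in for the general idea only and is not Soniox's method.

```python
from statistics import mean


def self_train(labeled, unlabeled, rounds=3, margin=1.0):
    """Toy self-training loop with a nearest-centroid classifier.

    labeled:   list of (value, label) pairs with labels 0 or 1 (the seed set)
    unlabeled: list of values with no labels
    margin:    minimum gap between the two centroid distances before a
               prediction is trusted enough to become a pseudo-label
    Returns the final centroids and the values that were never labeled.
    """
    pool = list(labeled)
    remaining = list(unlabeled)
    for _ in range(rounds):
        # Re-estimate each class centroid from everything labeled so far.
        c0 = mean(x for x, y in pool if y == 0)
        c1 = mean(x for x, y in pool if y == 1)
        still_unlabeled = []
        for x in remaining:
            d0, d1 = abs(x - c0), abs(x - c1)
            if abs(d0 - d1) >= margin:
                # Confident prediction: keep it as a pseudo-label.
                pool.append((x, 0 if d0 < d1 else 1))
            else:
                # Too ambiguous: leave it for a later round.
                still_unlabeled.append(x)
        remaining = still_unlabeled
    c0 = mean(x for x, y in pool if y == 0)
    c1 = mean(x for x, y in pool if y == 1)
    return c0, c1, remaining
```

In this toy version the model only ever sees two hand-labeled points, and every other training example is labeled by the model itself. The confidence threshold keeps ambiguous points out of the training pool so that early mistakes are less likely to compound.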

“To do fine-tuning of a particular model on a particular dataset, you would need an actual transcribed audio dataset. We do not require transcribed audio data to train our speech AI. We do not do fine-tuning,” Simonic stated.

Dataset and infrastructure

Soniox claims to have a proprietary dataset containing more than 88,000 hours of audio and 6.6 billion words of preprocessed text. By comparison, the latest speech recognition work from Facebook and Microsoft used between 13,100 and 65,000 hours of labeled and transcribed speech data. And Mozilla’s Common Voice, one of the largest public annotated voice corpora, has 9,000 hours of recordings.

While relatively underexplored in the speech domain, a growing body of research demonstrates the potential of learning from unlabeled data. Microsoft is using unsupervised learning to extract knowledge about disruptions to its cloud services. More recently, Facebook announced SEER, an unsupervised model trained on a billion images that ostensibly achieves state-of-the-art results on a range of computer vision benchmarks.

Soniox collects more data on a weekly basis, with the goal of expanding the range of vocabulary that the platform can transcribe. However, Simonic points out that more audio and text isn’t necessarily required to improve word accuracy. Soniox’s algorithms can “extract” more about familiar words over multiple iterations, essentially learning to recognize particular words better than before.

Image Credit: Soniox

AI has a well-known bias problem, and unsupervised learning doesn’t eliminate the potential for bias in a system’s predictions. For example, unsupervised computer vision systems can pick up racial and gender stereotypes present in training datasets. Simonic says that Soniox has taken care to ensure its audio data is “extremely diverse,” with speakers from most countries and accents around the world represented. He admits that the data distribution across accents isn’t balanced but claims that the system still manages to perform “extremely well” with different speakers.

Soniox also built its own training hardware infrastructure, which runs across multiple servers located in a colocation data center facility. Simonic says the company’s engineering team installed and optimized the system and machine learning frameworks and wrote the inference engine from scratch.

“It is utterly important to have under control every single bit of transfer and computation when you are training AI models at large scale. You need a rather large amount of computation to do just one iteration over a dataset of more than 88,000 hours,” Simonic stated. “[The inferencing engine] is highly optimized and can potentially run on any hardware. This is super important for production deployment, because speech recognition is computationally expensive to run compared to most other AI models and saving every bit of computation on a large volume amounts to large sums in savings — think of million of hours of audio and video per month.”

Scaling up

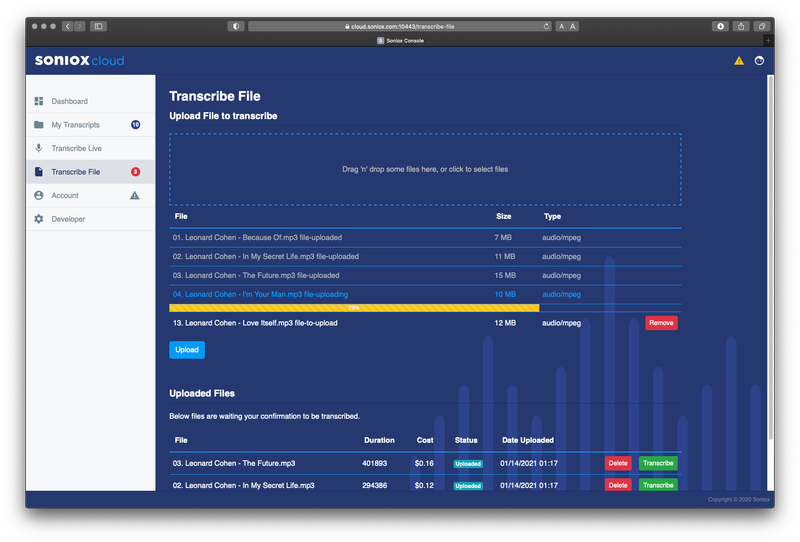

After launching in beta earlier this year, Soniox is making its platform generally available. New users get five hours per month of free speech recognition, which can be used in Soniox’s web or iOS app to record live audio from a microphone or to upload and transcribe files. Soniox offers an unlimited number of free recognition sessions of up to 30 seconds each, and developers can use the hours to transcribe audio via the Soniox API.

It’s early days, but Soniox says it recently signed its first customer in DeepScribe, a transcription startup targeting health care. DeepScribe switched from a Google speech-to-text model because Soniox’s transcriptions of doctor-patient conversations were more accurate, Simonic claims.

“To make a business, developing novel technology is not enough. Thus we developed services and products around our new speech recognition technology,” Simonic stated. “I expect there will be a lot more customers like DeepScribe once the word about Soniox gets around.”