The human hand is one of the fascinating creations of nature, and one of the most sought-after goals of artificial intelligence and robotics researchers. A robotic hand that could manipulate objects as we do would be enormously useful in factories, warehouses, offices, and homes.

Yet despite tremendous progress in the field, research on robotic hands remains extremely expensive and limited to a handful of very wealthy companies and research labs.

Now, new research promises to make robotics research available to resource-constrained organizations. In a paper published on arXiv, researchers at the University of Toronto, Nvidia, and other organizations have presented a new system that leverages highly efficient deep reinforcement learning techniques and optimized simulated environments to train robotic hands at a fraction of the cost it would normally take.

Training robotic hands is expensive

For all we know, the technology to create human-like robots is not here yet. However, given enough resources and time, you can make significant progress on specific tasks such as manipulating objects with a robotic hand.

In 2019, OpenAI presented Dactyl, a robotic hand that could manipulate a Rubik’s cube with impressive dexterity (though still significantly inferior to human dexterity). But it took 13,000 years’ worth of training to get it to the point where it could handle objects reliably.

How do you fit 13,000 years of training into a short period of time? Fortunately, many software tasks can be parallelized. You can train multiple reinforcement learning agents concurrently and merge their learned parameters. Parallelization can help to reduce the time it takes to train the AI that controls the robotic hand.
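As a rough illustration of what merging parameters from parallel agents can look like, the sketch below averages parameter vectors element-wise. Plain averaging is just one simple strategy picked for illustration; the article does not say how OpenAI or the paper's authors combine their workers, and the function name and toy values are hypothetical.

```python
from typing import List


def average_parameters(worker_params: List[List[float]]) -> List[float]:
    """Element-wise mean of the parameter vectors produced by parallel workers."""
    if not worker_params:
        raise ValueError("need at least one worker")
    size = len(worker_params[0])
    if any(len(params) != size for params in worker_params):
        raise ValueError("all workers must share the same parameter shape")
    count = len(worker_params)
    return [sum(params[i] for params in worker_params) / count for i in range(size)]


# Three hypothetical workers, each holding a two-parameter model.
merged = average_parameters([[0.0, 2.0], [2.0, 4.0], [4.0, 6.0]])
print(merged)  # [2.0, 4.0]
```

In practice, many distributed setups average gradients at every step rather than final parameters, but the idea of combining work done in parallel is the same.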

However, speed comes at a cost. One solution is to build thousands of physical robotic hands and train them simultaneously, a path that would be financially prohibitive even for the wealthiest tech companies. Another solution is to use a simulated environment. With simulated environments, researchers can train hundreds of AI agents at the same time, and then finetune the model on a real physical robot. The combination of simulation and physical training has become the norm in robotics, autonomous driving, and other areas of research that require interactions with the real world.

Simulations have their own challenges, however, and the computational costs can still be too much for smaller companies.

OpenAI, which has the financial backing of some of the wealthiest companies and investors, developed Dactyl using expensive robotic hands and an even more expensive compute cluster comprising around 30,000 CPU cores.

Lowering the costs of robotics research

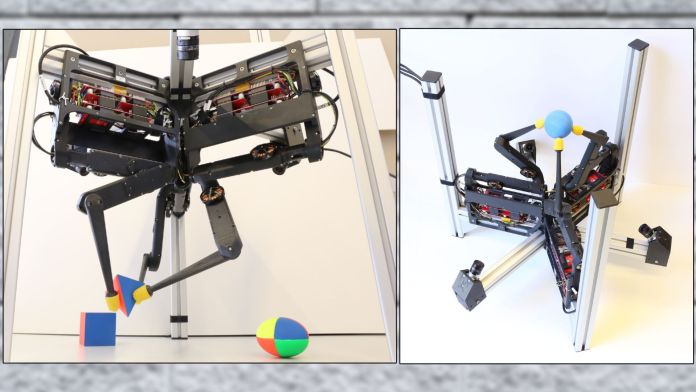

In 2020, a group of researchers at the Max Planck Institute for Intelligent Systems and New York University proposed an open-source robotic research platform that was dynamic and used affordable hardware. Named TriFinger, the system used the PyBullet physics engine for simulated learning and a low-cost robotic hand with three fingers and six degrees of freedom (6DoF). The researchers later launched the Real Robot Challenge (RRC), a Europe-based platform that gave researchers remote access to physical robots to test their reinforcement learning models on.

The TriFinger platform lowered the costs of robotic research but still had several challenges. PyBullet, which is a CPU-based environment, is noisy and slow and makes it hard to train reinforcement learning models efficiently. Poor simulated learning creates complications and widens the “sim2real gap,” the performance drop that the trained RL model suffers when transferred to a physical robot. Consequently, robotics researchers need to go through multiple cycles of switching between simulated training and physical testing to tune their RL models.

“Previous work on in-hand manipulation required large clusters of CPUs to run on. Furthermore, the engineering effort required to scale reinforcement learning methods has been prohibitive for most research teams,” Arthur Allshire, lead author of the paper and a Simulation and Robotics Intern at Nvidia, told TechTalks. “This meant that despite progress in scaling deep RL, further algorithmic or systems progress has been difficult. And the hardware cost and maintenance time associated with systems such as the Shadow Hand [used in OpenAI Dactyl] … has limited the accessibility of hardware to test learning algorithms on.”

Building on top of the work of the TriFinger team, this new group of researchers aimed to improve the quality of simulated learning while keeping the costs low.

Training RL agents with single-GPU simulation

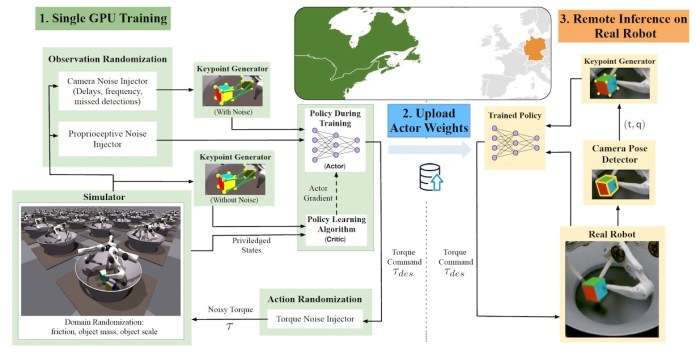

The researchers replaced PyBullet with Nvidia’s Isaac Gym, a simulated environment that can run efficiently on desktop-grade GPUs. Isaac Gym leverages Nvidia’s PhysX GPU-accelerated engine to allow thousands of parallel simulations on a single GPU. It can provide around 100,000 samples per second on an RTX 3090 GPU.

“Our task is suitable for resource-constrained research labs. Our method took one day to train on a single desktop-level GPU and CPU. Every academic lab working in machine learning has access to this level of resources,” Allshire said.

According to the paper, an entire setup to run the system, including training, inference, and physical robot hardware, can be purchased for less than $10,000.

The efficiency of the GPU-powered virtual environment enabled the researchers to train their reinforcement learning models in a high-fidelity simulation without reducing the speed of the training process. Higher fidelity makes the training environment more realistic, reducing the sim2real gap and the need for finetuning the model with physical robots.

The researchers used a sample object manipulation task to test their reinforcement learning system. As input, the RL model receives proprioceptive data from the simulated robot along with eight keypoints that represent the pose of the target object in three-dimensional Euclidean space. The model’s output is the torques that are applied to the motors of the robot’s nine joints.
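To make the keypoint idea concrete, here is a minimal sketch that turns an object pose into eight 3D points. It assumes the keypoints are the corners of a cube-shaped object, which is one natural reading of "eight keypoints" but is not spelled out in the article; the function name, the 0.1 edge length, and the pose values are hypothetical.

```python
from itertools import product
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def cube_keypoints(center: Vec3, rotation: Sequence[Sequence[float]], edge: float) -> List[Vec3]:
    """Return the 8 corners of a cube given its center, 3x3 rotation matrix, and edge length."""
    half = edge / 2.0
    corners: List[Vec3] = []
    for signs in product((-1.0, 1.0), repeat=3):
        # Corner in the object's own frame.
        local = tuple(sign * half for sign in signs)
        # Rotate into the world frame, then translate by the object's center.
        world = tuple(
            center[row] + sum(rotation[row][col] * local[col] for col in range(3))
            for row in range(3)
        )
        corners.append(world)
    return corners


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
keypoints = cube_keypoints((0.0, 0.0, 0.5), identity, edge=0.1)  # assumed cube size
flat_keypoints = [coord for point in keypoints for coord in point]
print(len(keypoints), len(flat_keypoints))  # 8 24
```

Flattened this way, the eight keypoints contribute 24 numbers to the policy's input, and position and orientation are expressed in the same units rather than as a separate position vector and rotation.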

The system uses Proximal Policy Optimization (PPO), a model-free RL algorithm. Model-free algorithms obviate the need to compute all the details of the environment, which is computationally very expensive, especially when you’re dealing with the physical world. AI researchers usually seek cost-efficient, model-free solutions to their reinforcement learning problems.
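For readers unfamiliar with PPO, its core is a clipped surrogate objective that limits how far a single update can move the policy. The sketch below computes that objective in plain Python for a batch of probability ratios and advantage estimates. It illustrates the general PPO formulation, not the authors' training code, and the clipping value of 0.2 is a commonly used default rather than a number from the article.

```python
from typing import Sequence


def ppo_clipped_objective(
    ratios: Sequence[float],
    advantages: Sequence[float],
    clip_eps: float = 0.2,  # assumed value; a common default
) -> float:
    """Mean clipped surrogate objective (to be maximized).

    ratios[i] is pi_new(a|s) / pi_old(a|s) for sample i;
    advantages[i] is the advantage estimate for the same sample.
    """
    if not ratios or len(ratios) != len(advantages):
        raise ValueError("ratios and advantages must be non-empty and equal in length")
    total = 0.0
    for ratio, advantage in zip(ratios, advantages):
        clipped_ratio = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Taking the minimum removes any incentive to push the ratio
        # outside the [1 - eps, 1 + eps] band.
        total += min(ratio * advantage, clipped_ratio * advantage)
    return total / len(ratios)


# A large ratio with positive advantage is capped at 1.2;
# a small ratio with negative advantage is charged at 0.8.
objective = ppo_clipped_objective([1.5, 0.5], [1.0, -1.0])
print(round(objective, 6))
```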

The researchers designed the reward for the robotic hand as a balance between the fingers’ distance from the object, the object’s target location, and the intended pose.
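One way to picture such a balance is as a weighted sum of three distance penalties. The sketch below is purely illustrative: the functional form, the weights, and every name in it are assumptions, since the article does not give the paper's actual reward formula.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def mean_distance(points_a: Sequence[Vec3], points_b: Sequence[Vec3]) -> float:
    """Average Euclidean distance between paired points."""
    if not points_a or len(points_a) != len(points_b):
        raise ValueError("point lists must be non-empty and equal in length")
    return sum(math.dist(a, b) for a, b in zip(points_a, points_b)) / len(points_a)


def shaped_reward(
    fingertips: Sequence[Vec3],
    object_pos: Vec3,
    object_keypoints: Sequence[Vec3],
    goal_pos: Vec3,
    goal_keypoints: Sequence[Vec3],
    w_reach: float = 1.0,     # hypothetical weights
    w_position: float = 1.0,
    w_pose: float = 1.0,
) -> float:
    """Negative weighted sum of three costs; higher is better, 0 is the best case."""
    reach_cost = mean_distance(fingertips, [object_pos] * len(fingertips))
    position_cost = math.dist(object_pos, goal_pos)
    pose_cost = mean_distance(object_keypoints, goal_keypoints)
    return -(w_reach * reach_cost + w_position * position_cost + w_pose * pose_cost)


goal_kps = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
shifted_kps = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
origin = (0.0, 0.0, 0.0)

at_goal = shaped_reward([origin] * 3, origin, goal_kps, origin, goal_kps)
off_goal = shaped_reward([origin] * 3, (0.0, 0.0, 1.0), shifted_kps, origin, goal_kps)
print(off_goal < at_goal)  # True
```

Changing the weights shifts the balance, for example toward reaching the object early in training or toward matching the final pose.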

To further improve the model’s robustness, the researchers added random noise to different elements of the environment during training.
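This practice is often called domain randomization. A minimal sketch of the idea follows, assuming that physical properties are resampled per episode and that Gaussian noise is added to observations. Which quantities the authors actually randomized, and over what ranges, is not stated in the article, so every field and range here is hypothetical.

```python
import random
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class EpisodeParams:
    object_mass: float            # kg, hypothetical range
    friction: float               # unitless, hypothetical range
    observation_noise_std: float  # hypothetical range


def sample_episode_params(rng: random.Random) -> EpisodeParams:
    """Draw a fresh set of simulation parameters for one training episode."""
    return EpisodeParams(
        object_mass=rng.uniform(0.05, 0.15),
        friction=rng.uniform(0.5, 1.5),
        observation_noise_std=rng.uniform(0.0, 0.01),
    )


def add_observation_noise(observation: Sequence[float], std: float, rng: random.Random) -> List[float]:
    """Perturb each observation value with zero-mean Gaussian noise."""
    return [value + rng.gauss(0.0, std) for value in observation]


rng = random.Random(0)  # seeded so the example is repeatable
params = sample_episode_params(rng)
noisy_obs = add_observation_noise([0.0, 0.1, 0.2], params.observation_noise_std, rng)
print(params)
print(noisy_obs)
```

Because the policy never sees exactly the same physics twice, it is pushed toward behavior that still works when the real robot differs slightly from the simulator.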

Testing on real robots

Once the reinforcement learning system was trained in the simulated environment, the researchers tested it in the real world through remote access to the TriFinger robots provided by the Real Robot Challenge. They replaced the proprioceptive and image input of the simulator with the sensor and camera information provided by the remote robot lab.

The trained system transferred its abilities to the real robot with a seven-percent drop in accuracy, an impressive sim2real gap improvement in comparison to previous methods.

The keypoint-based object tracking was especially useful in ensuring that the robot’s object-handling capabilities generalized across different scales, poses, conditions, and objects.

“One limitation of our method — deploying on a cluster we did not have direct physical access to — was the difficulty in trying other objects. However, we were able to try other objects in simulation and our policies proved relatively robust with zero-shot transfer performance from the cube,” Allshire mentioned.

The researchers say that the same technique can work on robotic hands with more degrees of freedom. They did not have the physical robot to measure the sim2real gap, but the Isaac Gym simulator also includes complex robotic hands such as the Shadow Hand used in Dactyl.

This system can be integrated with other reinforcement learning systems that address other aspects of robotics, such as navigation and pathfinding, to form a more complete solution for training mobile robots. “For example, you could have our method controlling the low-level control of a gripper while higher level planners or even learning-based algorithms are able to operate at a higher level of abstraction,” Allshire said.

The researchers believe that their work presents “a path for democratization of robot learning and a viable solution through large scale simulation and robotics-as-a-service.”

Ben Dickson is a software engineer and the founder of TechTalks. He writes about technology, business, and politics.

This story originally appeared on Bdtechtalks.com. Copyright 2021