
Microsoft and Nvidia today announced that they trained what they claim is the largest and most capable AI-powered language model to date: Megatron-Turing Natural Language Generation (MT-NLG). The successor to the companies’ Turing NLG 17B and Megatron-LM models, MT-NLG contains 530 billion parameters and achieves “unmatched” accuracy in a broad set of natural language tasks, Microsoft and Nvidia say, including reading comprehension, commonsense reasoning, and natural language inferences.

“The quality and results that we have obtained today are a big step forward in the journey towards unlocking the full promise of AI in natural language. The innovations of DeepSpeed and Megatron-LM will benefit existing and future AI model development and make large AI models cheaper and faster to train,” Nvidia’s senior director of product management and marketing for accelerated computing, Paresh Kharya, and group program manager for the Microsoft Turing team, Ali Alvi, wrote in a blog post. “We look forward to how MT-NLG will shape tomorrow’s products and motivate the community to push the boundaries of natural language processing (NLP) even further. The journey is long and far from complete, but we are excited by what is possible and what lies ahead.”

Training enormous language models

In machine learning, parameters are the part of the model that is learned from historical training data. Generally speaking, in the language domain, the correlation between the number of parameters and sophistication has held up remarkably well. Language models with large numbers of parameters, more data, and more training time have been shown to acquire a richer, more nuanced understanding of language, for example gaining the ability to summarize books and even complete programming code.

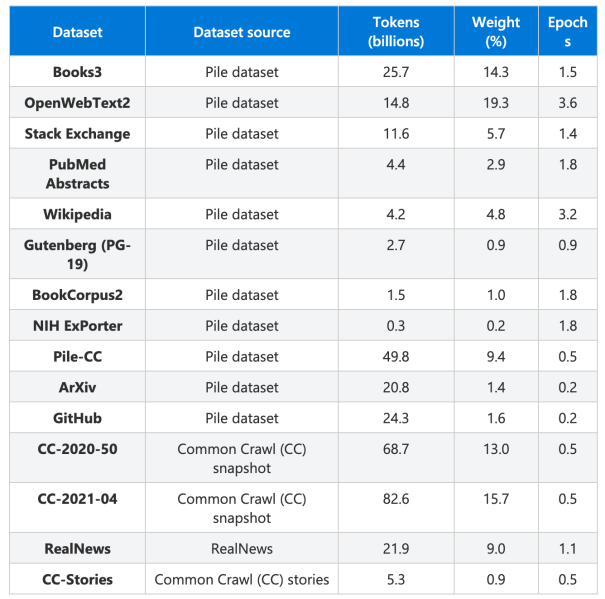

To train MT-NLG, Microsoft and Nvidia say that they created a training dataset with 270 billion tokens from English-language websites. Tokens, a way of separating pieces of text into smaller units in natural language, can be words, characters, or parts of words. Like all AI models, MT-NLG had to “train” by ingesting a set of examples to learn patterns among data points, like grammatical and syntactical rules.
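To make the three token granularities concrete, here is a minimal Python sketch. It is purely illustrative: the function names and the toy vocabulary are hypothetical, and this is not the tokenizer Microsoft and Nvidia used for MT-NLG.

```python
import re


def word_tokens(text):
    """Split text into words and standalone punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


def char_tokens(text):
    """Treat every character as its own token."""
    return list(text)


def subword_tokens(word, vocab):
    """Greedy longest-match split against a toy vocabulary.

    Falls back to a single character when no vocabulary entry matches,
    so the loop always makes progress.
    """
    pieces = []
    i = 0
    while i < len(word):
        for j in range(len(word), i, -1):
            if word[i:j] in vocab or j == i + 1:
                pieces.append(word[i:j])
                i = j
                break
    return pieces


# Hypothetical vocabulary, chosen only for this example.
TOY_VOCAB = {"train", "ing"}

words = word_tokens("Models learn patterns.")
chars = char_tokens("AI")
parts = subword_tokens("training", TOY_VOCAB)
```

Production tokenizers learn their subword vocabulary from data instead of using a hand-written set, but the trade-off is the same: word tokens keep sequences short, character tokens keep the vocabulary small, and subword tokens sit in between.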

The dataset largely came from The Pile, an 835GB collection of 22 smaller datasets created by the open source AI research effort EleutherAI. The Pile spans academic sources (e.g., arXiv, PubMed), communities (StackExchange, Wikipedia), code repositories (GitHub), and more, which Microsoft and Nvidia say they curated and combined with filtered snapshots of the Common Crawl, a large collection of webpages including news stories and social media posts.

Training took place across 560 Nvidia DGX A100 servers, each containing eight Nvidia A100 80GB GPUs.

When benchmarked, Microsoft says that MT-NLG can infer basic mathematical operations even when the symbols are “badly obfuscated.” While not extremely accurate, the model appears to go beyond memorization for arithmetic and manages to complete tasks containing questions that prompt it for an answer, a major challenge in NLP.

It’s well-established that models like MT-NLG can amplify the biases in the data on which they’re trained, and indeed, Microsoft and Nvidia acknowledge that the model “picks up stereotypes and biases from the [training] data.” That’s likely because a portion of the dataset was sourced from communities with pervasive gender, race, physical, and religious prejudices, which curation can’t completely address.

In a paper, the Middlebury Institute of International Studies’ Center on Terrorism, Extremism, and Counterterrorism claims that GPT-3 and similar models can generate “informational” and “influential” text that might radicalize people into far-right extremist ideologies and behaviors. A group at Georgetown University has used GPT-3 to generate misinformation, including stories around a false narrative, articles altered to push a bogus perspective, and tweets riffing on particular points of disinformation. Other studies, like one published by Intel, MIT, and Canadian AI initiative CIFAR researchers in April, have found high levels of stereotypical bias in some of the most popular open source models, including Google’s BERT, XLNet, and Facebook’s RoBERTa.

Microsoft trains a 530billion parameter GPT3-style language model. This is the biggest LM in existence. (There’s also the mysterious multi-modal 1.5trillion+ ‘Wu Dao’ MOE model but little known about it). Microsoft trains on ‘The Pile’ dataset. https://t.co/md03QzqlxA

— Jack Clark (@jackclarkSF) October 11, 2021

Microsoft and Nvidia claim that they’re “committed to working on addressing [the] problem” and encourage “continued research to help in quantifying the bias of the model.” They also say that any use of Megatron-Turing in production “must ensure that proper measures are put in place to mitigate and minimize potential harm to users,” and adhere to tenets such as those outlined in Microsoft’s Responsible AI Principles.

“We live in a time [when] AI advancements are far outpacing Moore’s law. We continue to see more computation power being made available with newer generations of GPUs, interconnected at lightning speeds. At the same time, we continue to see hyper-scaling of AI models leading to better performance, with seemingly no end in sight,” Kharya and Alvi continued. “Marrying these two trends together are software innovations that push the boundaries of optimization and efficiency.”

The cost of large models

Projects like MT-NLG, AI21 Labs’ Jurassic-1, Huawei’s PanGu-Alpha, Naver’s HyperCLOVA, and the Beijing Academy of Artificial Intelligence’s Wu Dao 2.0 are impressive from an academic standpoint, but building them doesn’t come cheap. For example, the training dataset for OpenAI’s GPT-3, one of the world’s largest language models, was 45 terabytes in size, enough to fill 90 500GB hard drives.

AI training costs dropped 100-fold between 2017 and 2019, according to one source, but the totals still exceed the compute budgets of most startups. The inequity favors corporations with extraordinary access to resources at the expense of small-time entrepreneurs, cementing incumbent advantages.

For example, OpenAI’s GPT-3 required an estimated 3.14 × 10^23 floating-point operations (FLOPs) of compute during training. In computer science, FLOPS (floating-point operations per second) is a measure of raw processing performance, typically used to compare different types of hardware. Assuming OpenAI reserved 28 teraflops (28 trillion floating-point operations per second) of compute across a bank of Nvidia V100 GPUs, a common GPU available through cloud services, it’d take $4.6 million for a single training run. One Nvidia RTX 8000 GPU with 15 teraflops of compute would be substantially cheaper, but it’d take 665 years to finish the training.
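The 665-year figure can be roughly reproduced with back-of-the-envelope arithmetic. The sketch below assumes the GPT-3 estimate refers to a total of about 3.14 × 10^23 floating-point operations and that each GPU sustains its quoted throughput for the whole run, which real training jobs do not achieve. The function and constant names are illustrative only.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600  # 31,536,000 seconds


def training_years(total_operations, operations_per_second):
    """Years needed to run total_operations at a constant sustained rate."""
    return total_operations / operations_per_second / SECONDS_PER_YEAR


# Assumption: total training compute for GPT-3, in floating-point operations.
GPT3_TOTAL_OPERATIONS = 3.14e23

# Throughput figures quoted in the article, in operations per second.
V100_RATE = 28e12      # 28 teraflops
RTX_8000_RATE = 15e12  # 15 teraflops

v100_years = training_years(GPT3_TOTAL_OPERATIONS, V100_RATE)
rtx_8000_years = training_years(GPT3_TOTAL_OPERATIONS, RTX_8000_RATE)

print(f"At 28 teraflops: about {v100_years:.0f} years")
print(f"At 15 teraflops: about {rtx_8000_years:.0f} years")
```

Under these assumptions the 15-teraflop case works out to roughly 664 years, in line with the 665 years cited, and the 28-teraflop case to roughly 356 years of continuous compute, which is why such a run is spread across many GPUs in parallel.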

Microsoft and Nvidia say that they observed between 113 and 126 teraflops per GPU while training MT-NLG. The cost is likely to have been in the millions of dollars.

A Synced report estimated that a fake news detection model developed by researchers at the University of Washington cost $25,000 to train, and Google spent around $6,912 to train a language model called BERT that it used to improve the quality of Google Search results. Storage costs also quickly mount when dealing with datasets at the terabyte or petabyte scale. To take an extreme example, one of the datasets accumulated by Tesla’s self-driving team, 1.5 petabytes of video footage, would cost over $67,500 to store in Azure for three months, according to CrowdStorage.

The effects of AI and machine learning model training on the environment have also been brought into relief. In June 2020, researchers at the University of Massachusetts at Amherst released a report estimating that the amount of power required for training and searching a certain model involves the emissions of roughly 626,000 pounds of carbon dioxide, equivalent to nearly five times the lifetime emissions of the average U.S. car. OpenAI itself has conceded that models like Codex require significant amounts of compute, on the order of hundreds of petaflops per day, which contributes to carbon emissions.

In a sliver of good news, the cost for FLOPS and basic machine learning operations has been falling over the past few years. A 2020 OpenAI survey found that since 2012, the amount of compute needed to train a model to the same performance on classifying images in a popular benchmark, ImageNet, has been decreasing by a factor of two every 16 months. Other recent research suggests that large language models aren’t always more complex than smaller models, depending on the techniques used to train them.
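A halving every 16 months compounds quickly. The short sketch below turns that rate into a reduction factor over an arbitrary time span; it assumes the trend is perfectly exponential, which is a simplification, and the function name is hypothetical.

```python
def compute_reduction_factor(months, halving_period_months=16.0):
    """How many times less compute is needed after `months`,
    assuming the requirement halves every `halving_period_months`."""
    return 2.0 ** (months / halving_period_months)


one_period = compute_reduction_factor(16)   # one halving
two_periods = compute_reduction_factor(32)  # two halvings
four_years = compute_reduction_factor(48)   # three halvings
```

Under this assumption, four years of progress cuts the compute needed for the same ImageNet performance by a factor of eight.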

Maria Antoniak, a natural language processing researcher and data scientist at Cornell University, says when it comes to natural language, it’s an open question whether larger models are the right approach. While some of the best benchmark performance scores today come from large datasets and models, the payoff from dumping enormous amounts of data into models is uncertain.

“The current structure of the field is task-focused, where the community gathers together to try to solve specific problems on specific datasets,” Antoniak told VentureBeat in a previous interview. “These tasks are usually very structured and can have their own weaknesses, so while they help our field move forward in some ways, they can also constrain us. Large models perform well on these tasks, but whether these tasks can ultimately lead us to any true language understanding is up for debate.”