
The advent of Transformers in 2017 completely changed the world of neural networks. Ever since, the core idea of Transformers has been remixed, repackaged, and rebundled in several models. The results have surpassed the state of the art in several machine learning benchmarks. In fact, all the top benchmarks in the field of natural language processing are currently dominated by Transformer-based models. Some of the Transformer-family models are BERT, ALBERT, and the GPT series of models.

In any machine learning model, the most important components of the training process are:

- The code of the model: the components of the model and its configuration

- The data to be used for training

- The available compute power

With the Transformer family of models, researchers finally arrived at a way to keep improving the performance of a model, seemingly without limit: you just increase the amount of training data and compute power.

This is exactly what OpenAI did, first with GPT-2 and then with GPT-3. Being a well-funded ($1 billion+) company, it could afford to train some of the biggest models in the world. A private corpus of 500 billion tokens was used for training the model, and approximately $50 million was spent on compute costs.

While the code for most of the GPT language models is open source, the model is impossible to replicate without the massive amounts of data and compute power. And OpenAI has chosen to withhold public access to its trained models, making them available via API to only a select handful of companies and individuals. Further, its access policy is undocumented, arbitrary, and opaque.

Genesis of GPT-Neo

Stella Biderman, Leo Gao, Sid Black, and others formed EleutherAI with the idea of making AI technology that would be open source to the world. One of the first problems the team chose to tackle was making a GPT-like language model that would be accessible to all.

As mentioned before, most of the code for such a model was already available, so the core challenges were to find the data and the compute power. The Eleuther team set out to build an open source data set of a scale comparable to what OpenAI used for its GPT language models. This led to the creation of The Pile. The Pile, released in July 2020, is an 825GB data set specifically designed to train language models. It contains data from 22 diverse sources, including academic sources (arXiv, PubMed, FreeLaw, etc.), Internet webpages (StackExchange, Wikipedia, etc.), dialogs from subtitles, GitHub, and more.

Source: The Pile paper, Arxiv.


For compute, EleutherAI was able to use idle compute from TPU Research Cloud (TRC). TRC is a Google Cloud initiative that supports research projects with the expectation that the results of the research will be shared with the world via open source code, models, etc.

On March 22, 2021, after months of painstaking research and training, the EleutherAI team released two trained GPT-style language models, GPT-Neo 1.3B and GPT-Neo 2.7B. The code and the trained models are open sourced under the MIT license, and the models can be used for free through HuggingFace’s Transformers library.
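As a minimal sketch of what "used for free" looks like in practice, the snippet below wraps the Transformers `pipeline` API around the two released checkpoints. It assumes the Hugging Face Hub IDs `EleutherAI/gpt-neo-1.3B` and `EleutherAI/gpt-neo-2.7B`, a recent `transformers` install with a PyTorch backend, and enough memory for the chosen checkpoint. The helper names (`load_generator`, `generate`) and the sampling settings are illustrative choices, not EleutherAI's.

```python
from functools import lru_cache

# Hugging Face Hub IDs of the two GPT-Neo checkpoints (assumed naming).
GPT_NEO_MODELS = {
    "1.3B": "EleutherAI/gpt-neo-1.3B",
    "2.7B": "EleutherAI/gpt-neo-2.7B",
}


@lru_cache(maxsize=2)
def load_generator(size: str = "2.7B"):
    """Build a text-generation pipeline once per checkpoint size and reuse it."""
    # Imported lazily so this module loads even where transformers is absent.
    from transformers import pipeline

    return pipeline("text-generation", model=GPT_NEO_MODELS[size])


def generate(prompt: str, size: str = "2.7B", max_new_tokens: int = 100) -> str:
    """Return the prompt followed by a sampled continuation from GPT-Neo."""
    generator = load_generator(size)
    outputs = generator(
        prompt,
        max_new_tokens=max_new_tokens,
        do_sample=True,   # sample instead of greedy decoding
        temperature=0.9,  # illustrative value, not a recommendation
    )
    # The pipeline returns a list with one dict per generated sequence.
    return outputs[0]["generated_text"]
```

Usage would look like `print(generate("In a shocking finding, scientists discovered a herd of unicorns", size="1.3B"))`. The first call downloads several gigabytes of weights, and `lru_cache` keeps the loaded pipeline around so later calls skip that cost. Because sampling is random, repeated runs produce different text.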

Comparing GPT-Neo and GPT-3

Let’s compare GPT-Neo and GPT-3 with respect to model size and performance benchmarks, and finally look at some examples.

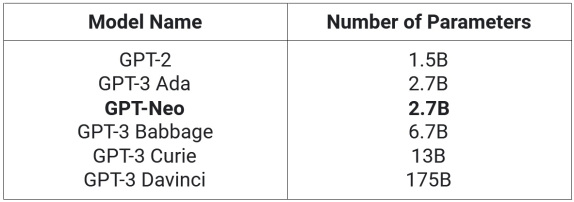

Model size. In terms of model size and compute, the largest GPT-Neo model consists of 2.7 billion parameters. In comparison, the GPT-3 API offers four models, ranging from 2.7 billion parameters to 175 billion parameters.

Caption: GPT-3 parameter sizes as estimated here, and GPT-Neo as reported by EleutherAI.

As you can see, GPT-Neo is larger than GPT-2 and comparable to the smallest GPT-3 model.
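The size comparison comes down to simple ratios. The sketch below uses the figures quoted in this article (2.7 billion parameters for GPT-Neo and for GPT-3 Ada, 175 billion for GPT-3 Davinci) plus 1.5 billion for the largest GPT-2 release. The GPT-3 API sizes are estimates rather than numbers OpenAI published per API model, and the dictionary keys are just illustrative labels.

```python
# Parameter counts in billions. GPT-Neo is as reported by EleutherAI;
# the GPT-3 API sizes are estimates; 1.5B is the largest GPT-2 release.
PARAMS_B = {
    "GPT-2 (largest)": 1.5,
    "GPT-Neo 2.7B": 2.7,
    "GPT-3 Ada": 2.7,
    "GPT-3 Davinci": 175.0,
}


def size_ratio(model: str, baseline: str = "GPT-Neo 2.7B") -> float:
    """How many times larger `model` is than `baseline`, by parameter count."""
    return PARAMS_B[model] / PARAMS_B[baseline]


for name, billions in PARAMS_B.items():
    print(f"{name:16s} {billions:6.1f}B  x{size_ratio(name):.2f} vs GPT-Neo 2.7B")
```

GPT-Neo 2.7B comes out at 1.8 times the largest GPT-2 model and on par with Ada, while Davinci is roughly 64.8 times larger, consistent with the "about 65 times as many parameters" figure in the benchmark discussion.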

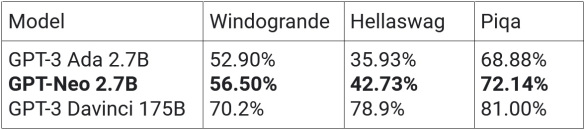

Performance benchmark metrics. EleutherAI reports that GPT-Neo outperformed the closest comparable GPT-3 model (GPT-3 Ada) on all NLP reasoning benchmarks.

GPT-Neo outperformed GPT-3 Ada on HellaSwag and PIQA. HellaSwag is a multiple-choice sentence completion benchmark in which each item has a context paragraph and four possible endings. PIQA measures common sense reasoning: the machine has to pick which of two sentences makes the most sense. GPT-Neo also outperformed GPT-3 Ada on WinoGrande, a benchmark that requires common sense to resolve ambiguous pronouns in a sentence.
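Because these are multiple-choice tasks, a language model can be evaluated on them without task-specific training by scoring each candidate ending and picking the highest-scoring one. The sketch below shows that selection-and-accuracy loop under stated assumptions: `toy_score` is a hypothetical stand-in for a real model's log-likelihood of an ending given its context, and the three examples are invented for illustration. Real evaluation harnesses typically sum token log-probabilities and may normalize by length; the article does not say exactly which procedure produced EleutherAI's reported numbers.

```python
from typing import Callable, Sequence, Tuple

# (context, candidate endings, index of the correct ending)
Example = Tuple[str, Sequence[str], int]


def pick_ending(context: str, endings: Sequence[str],
                score: Callable[[str, str], float]) -> int:
    """Return the index of the highest-scoring ending (the first one wins ties)."""
    scores = [score(context, ending) for ending in endings]
    return max(range(len(endings)), key=lambda i: scores[i])


def accuracy(examples: Sequence[Example],
             score: Callable[[str, str], float]) -> float:
    """Fraction of examples whose top-scoring ending is the labeled one."""
    correct = sum(pick_ending(ctx, ends, score) == label
                  for ctx, ends, label in examples)
    return correct / len(examples)


def toy_score(context: str, ending: str) -> float:
    """Hypothetical stand-in for log P(ending | context) from a language model:
    it only counts ending words that also appear in the context."""
    context_words = set(context.lower().split())
    return float(sum(word in context_words for word in ending.lower().split()))


examples = [
    ("The chef chopped the onions", ["then cried over the onions", "then flew to the moon"], 0),
    ("She poured water into the glass", ["and drank the water", "and sang loudly"], 0),
    ("He opened the umbrella because", ["it started to rain", "the umbrella was blue"], 0),
]
print(accuracy(examples, toy_score))
```

The toy scorer rewards word overlap rather than common sense, so it picks "the umbrella was blue" for the third example and scores 2/3. That failure is exactly what these benchmarks are built to expose, and it is why a model's own likelihoods are used as the score in practice.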

However, GPT-3 Davinci, the largest version of GPT-3, with about 65 times as many parameters, comfortably beats GPT-Neo in all the benchmarks, as you would expect.

Caption: Model metrics as reported by EleutherAI, except GPT-3 175B, which is from OpenAI’s GPT-3 paper.

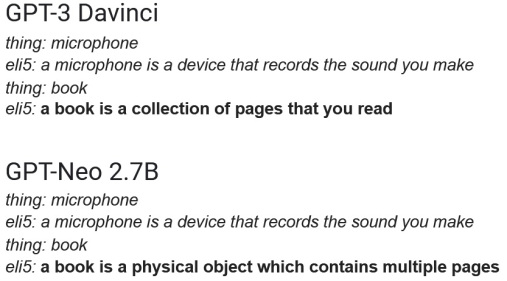

Examples. Let’s look at a few side-by-side examples of generated text from the largest GPT-3 model (taken from various GPT-3 Davinci examples found online) and GPT-Neo (which I generated using HuggingFace’s GPT-Neo 2.7B Transformers implementation).

The first example we will look at is the completion of ELI-5 format sentences, where the text in italics was the prompt given to the model.

I would say both GPT-Neo and GPT-3 worked equally well in this example.

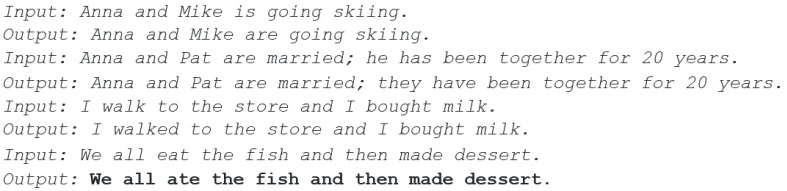

The next experiment is to check whether GPT-Neo can correct grammatically incorrect sentences like GPT-3 can. Again, the italicized parts are the prompt given to the model.

Again, GPT-Neo did a great job of correcting “eat” to “ate,” especially considering the model was not specifically trained to do this.
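Grammar correction works without task-specific training because the task is phrased as plain text completion: the prompt demonstrates a pattern and the model continues it. The article's exact prompt is not reproduced in the text, so the `Original:` / `Corrected:` format and the helper names below are hypothetical, a minimal sketch of how such a few-shot prompt could be built and how the correction could be read back from the model's continuation.

```python
from typing import Iterable, Tuple


def grammar_prompt(sentence: str, shots: Iterable[Tuple[str, str]] = ()) -> str:
    """Build a few-shot prompt that stops exactly where the model should write the fix."""
    # Each demonstration pairs an incorrect sentence with its corrected form.
    blocks = [f"Original: {wrong}\nCorrected: {right}" for wrong, right in shots]
    # The final block leaves "Corrected:" open for the model to complete.
    blocks.append(f"Original: {sentence}\nCorrected:")
    return "\n\n".join(blocks)


def extract_correction(continuation: str) -> str:
    """Keep only the first non-blank line the model writes after 'Corrected:'."""
    lines = continuation.strip().splitlines()
    return lines[0].strip() if lines else ""


prompt = grammar_prompt(
    "Yesterday I eat an apple.",
    shots=[("She go to school every day.", "She goes to school every day.")],
)
print(prompt)
```

Language models often keep going and invent further `Original:` lines, which is why the sketch trims the continuation to its first line: an illustrative continuation such as `" Yesterday I ate an apple.\n\nOriginal: ..."` reduces to `Yesterday I ate an apple.`.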

Finally, here is the famous “discovery of English-speaking unicorns” piece (GPT-2 version here and GPT-3 version here), reimagined and written by GPT-Neo. The prompt given to the model is in italics and bold.

In a shocking finding, scientists discovered a herd of unicorns living in a remote, previously unexplored valley, in the Andes Mountains. Even more surprising to the researchers was the fact that the unicorns spoke perfect English.

The only proof was a single word: “hello.”

“The unicorns are very good listeners, so they learned to speak English from watching humans,” stated Dr. David Orme, chief executive of the Zoological Society of London, in a press release. “In fact, they also learned to speak Spanish from watching humans. They said hello to us in both languages.”

While the discovery is spectacular in and of itself, the news gets even more mind-blowing when you consider that the unicorns had been there for thousands of years. They were “living fossils,” Orme stated, which is a fancy way of saying that their origins have yet to be determined.

“It is extremely exciting that we now have evidence of a population of unicorns, that we know to be around and that is living,” Dr. John Fitzpatrick, a conservation and anthropology professor at the University of California, stated in a press release. “This shows that they are not just a myth and that humans are not the only species on Earth to have developed language.”

The findings have been published in a new study in the journal Proceedings of the Royal Society B: Biological Sciences.

A ‘unicorn’ from the Andean mountains in Colombia. (Image: The Royal Society)

The discovery was made this summer in a remote but spectacular valley in the Andean Mountains in Colombia known as Bureta. It’s believed the unicorns were in their 20s. “It’s a very unusual place to find these animals and at the moment there is no evidence that humans have been there before,” Orme stated.

The scientists stated the unicorns had been living in that valley as long as their species has, which is estimated at at least 200,000 years.

This means the area’s rich history of megafauna, like dinosaurs, pterosaurs and saber-toothed cats, is still far from over.

“If it is true in a relatively isolated valley near Bureta Colombia that is more than 200,000 years old and now also having a population of these animals, then Bureta is truly a unique and special place,” Fitzpatrick stated.

Once again, GPT-Neo was able to create a coherent, almost-believable article without missing out on the central themes: the unicorn discovery, the English-speaking aspect, the Andes, and so on.

All in all, GPT-Neo 2.7B scores better than GPT-3 2.7B (Ada) on the NLP benchmarks, but much worse than GPT-3 175B (Davinci). Qualitatively, however, GPT-Neo 2.7B’s completions and writing in these examples were as good as even those of GPT-3 175B (Davinci), the largest GPT-3 model.

The bottom line here is: GPT-Neo is a great open source alternative to GPT-3, especially given OpenAI’s closed access policy.

Abhishek Iyer is the founder of FreeText AI, a company specializing in text mining and review analysis.