For the better part of a year, OpenAI’s GPT-3 has remained among the largest AI language models ever created, if not the largest of its kind. Via an API, people have used it to automatically write emails and articles, summarize text, compose poetry and recipes, create website layouts, and generate code for deep learning in Python. But an AI lab based in Tel Aviv, Israel, called AI21 Labs says it is planning to release a larger model and make it available via a service, with the idea being to challenge OpenAI’s dominance in the “natural language processing-as-a-service” field.

AI21 Labs, which is advised by Udacity founder Sebastian Thrun, was cofounded in 2017 by Crowdx founder Ori Goshen, Stanford University professor Yoav Shoham, and Mobileye CEO Amnon Shashua. The startup says that the largest version of its model, called Jurassic-1 Jumbo, contains 178 billion parameters, or 3 billion more than GPT-3 (but not more than PanGu-Alpha, HyperCLOVA, or Wu Dao 2.0). In machine learning, parameters are the part of the model that is learned from historical training data. Generally speaking, in the language domain, the correlation between the number of parameters and sophistication has held up remarkably well.

AI21 Labs claims that Jurassic-1 can recognize 250,000 lexical items including expressions, words, and phrases, giving it a larger vocabulary than most existing models, including GPT-3, which has a 50,000-item vocabulary. The company also claims that Jurassic-1 Jumbo’s vocabulary is among the first to span “multi-word” items like named entities (“The Empire State Building,” for example), meaning that the model might have a richer semantic representation of concepts that make sense to humans.

“AI21 Labs was founded to fundamentally change and improve the way people read and write. Pushing the frontier of language-based AI requires more than just pattern recognition of the sort offered by current deep language models,” CEO Shoham told VentureBeat via email.

Scaling up

The Jurassic-1 models will be available via AI21 Labs’ Studio platform, which lets developers experiment with the model in open beta to prototype applications like virtual agents and chatbots. Should developers wish to go live with their apps and serve “production-scale” traffic, they’ll be able to apply for access to custom models and get their own private fine-tuned model, which they’ll be able to scale in a “pay-as-you-go” cloud services model.

“Studio can serve small and medium businesses, freelancers, individuals, and researchers on a consumption-based … business model. For clients with enterprise-scale volume, we offer a subscription-based model. Customization is built into the offering. [The platform] allows any user to train their own custom model that’s based on Jurassic-1 Jumbo, but fine-tuned to better perform a specific task,” Shoham said. “AI21 Labs handles the deployment, serving, and scaling of the custom models.”

AI21 Labs’ first product was Wordtune, an AI-powered writing aid that suggests rephrasings of text wherever users type. Meant to compete with platforms like Grammarly, Wordtune offers “freemium” pricing as well as a team offering and partner integration. But the Jurassic-1 models and Studio are much more ambitious.

Shoham says that the Jurassic-1 models were trained in the cloud with “hundreds” of distributed GPUs on an unspecified public service. Simply storing 178 billion parameters requires more than 350GB of memory, far more than even the highest-end GPUs offer, which necessitated that the development team use a combination of strategies to make the process as efficient as possible.
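The 350GB figure can be roughly reproduced with back-of-the-envelope arithmetic. The sketch below assumes each parameter is stored at 16-bit precision (2 bytes), which the company has not confirmed, and uses a hypothetical 80GB accelerator purely for comparison; the function names are illustrative.

```python
import math


def parameter_memory_gb(num_parameters: int, bytes_per_parameter: int = 2) -> float:
    """Memory needed just to hold the weights, in gigabytes (1 GB = 1e9 bytes).

    bytes_per_parameter=2 assumes 16-bit storage; this is an assumption,
    not a detail disclosed by AI21 Labs.
    """
    return num_parameters * bytes_per_parameter / 1e9


def min_devices_for_weights(total_gb: float, device_memory_gb: float) -> int:
    """Smallest number of devices whose combined memory can hold the weights."""
    return math.ceil(total_gb / device_memory_gb)


JUMBO_PARAMETERS = 178_000_000_000  # parameter count reported in the article
HYPOTHETICAL_GPU_GB = 80            # assumed device size, for illustration only

jumbo_gb = parameter_memory_gb(JUMBO_PARAMETERS)
devices = min_devices_for_weights(jumbo_gb, HYPOTHETICAL_GPU_GB)
print(f"Weights alone: {jumbo_gb:.0f} GB, spread over at least {devices} devices")
```

Under these assumptions the weights alone exceed 350GB and cannot fit on a single device, before counting the additional memory that training requires for gradients and optimizer state.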

The training dataset for Jurassic-1 Jumbo, which contains 300 billion tokens, was compiled from English-language websites including Wikipedia, news publications, StackExchange, and OpenSubtitles. Tokens, a way of separating pieces of text into smaller units in natural language, can be either words, characters, or parts of words.

In a test on a benchmark suite that it created, AI21 Labs says that the Jurassic-1 models perform on a par with or better than GPT-3 across a range of tasks, including answering academic and legal questions. By going beyond traditional language model vocabularies, which include words and word pieces like “potato” and “make” and “e-,” “gal-,” and “itarian,” Jurassic-1 canvasses less common nouns and turns of phrase like “run of the mill,” “New York Yankees,” and “Xi Jinping.” It’s also ostensibly more sample-efficient: while the sentence “Once in a while I like to visit New York City” would be represented by 11 tokens for GPT-3 (“Once,” “in,” “a,” “while,” and so on), it would be represented by just 4 tokens for the Jurassic-1 models.
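To see how a multi-word vocabulary could shrink a token count from 11 to 4, consider a toy greedy longest-match tokenizer. This is only a sketch: the vocabulary entries and the resulting split are hypothetical, chosen to match the counts quoted above, and neither Jurassic-1’s actual tokenizer nor GPT-3’s works exactly this way.

```python
def tokenize(sentence: str, vocabulary: set[str], max_words: int = 4) -> list[str]:
    """Greedy longest-match tokenization over whitespace-separated words.

    At each position, take the longest phrase (up to max_words words) found
    in the vocabulary; fall back to a single word when nothing longer matches.
    """
    words = sentence.split()
    tokens = []
    i = 0
    while i < len(words):
        for span in range(min(max_words, len(words) - i), 0, -1):
            candidate = " ".join(words[i:i + span])
            if span == 1 or candidate in vocabulary:
                tokens.append(candidate)
                i += span
                break
    return tokens


SENTENCE = "Once in a while I like to visit New York City"

# Hypothetical multi-word entries; not the real Jurassic-1 vocabulary.
MULTI_WORD_VOCABULARY = {"Once in a while", "I like to", "New York City"}

word_level = tokenize(SENTENCE, set())                  # no phrases: one token per word
phrase_level = tokenize(SENTENCE, MULTI_WORD_VOCABULARY)

print(len(word_level), word_level)
print(len(phrase_level), phrase_level)
```

With no multi-word entries the sentence splits into 11 single-word tokens, while the toy phrase vocabulary covers it in 4, so the same text consumes fewer positions in the model’s input.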

“Logic and math problems are notoriously hard even for the most powerful language models. Jurassic-1 Jumbo can solve very simple arithmetic problems, like adding two large numbers,” Shoham said. “There’s a bit of a secret sauce in how we customize our language models to new tasks, which makes the process more robust than standard fine-tuning techniques. As a result, custom models built in Studio are less likely to suffer from catastrophic forgetting, [or] when fine-tuning a model on a new task causes it to lose core knowledge or capabilities that were previously encoded in it.”

Connor Leahy, a member of the open source research group EleutherAI, told VentureBeat via email that while he believes there’s nothing fundamentally novel about the Jurassic-1 Jumbo model, it’s an impressive feat of engineering, and he has “little doubt” it will perform on a par with GPT-3. “It will be interesting to observe how the ecosystem around these models develops in the coming years, especially what kinds of downstream applications emerge as robustly useful,” he added. “[The question is] whether such services can be run profitably with fierce competition, and how the inevitable security concerns will be handled.”

Open questions

Beyond chatbots, Shoham sees the Jurassic-1 models and Studio being used for paraphrasing and summarization, like generating short product names from product descriptions. The tools could also be used to extract entities, events, and facts from texts and label whole libraries of emails, articles, and notes by topic or category.

But troublingly, AI21 Labs has left key questions about the Jurassic-1 models and their possible shortcomings unaddressed. For example, when asked what steps had been taken to mitigate potential gender, race, and religious biases as well as other forms of toxicity in the models, the company declined to comment. It also refused to say whether it would allow third parties to audit or study the models’ outputs prior to launch.

This is cause for concern, as it’s well-established that models amplify the biases in the data on which they were trained. A portion of the language data is often sourced from communities with pervasive gender, race, physical, and religious prejudices. In a paper, the Middlebury Institute of International Studies’ Center on Terrorism, Extremism, and Counterterrorism claims that GPT-3 and similar models can generate “informational” and “influential” text that might radicalize people into far-right extremist ideologies and behaviors. A group at Georgetown University has used GPT-3 to generate misinformation, including stories around a false narrative, articles altered to push a bogus perspective, and tweets riffing on particular points of disinformation. Other studies, like one published by Intel, MIT, and Canadian AI initiative CIFAR researchers in April, have found high levels of stereotypical bias in some of the most popular open source models, including Google’s BERT and XLNet and Facebook’s RoBERTa.

More recent research suggests that toxic language models deployed into production might struggle to understand aspects of minority languages and dialects. This could force people using the models to switch to “white-aligned English” to ensure the models work better for them, or discourage minority speakers from engaging with the models at all.

It’s unclear to what extent the Jurassic-1 models exhibit these kinds of biases, in part because AI21 Labs hasn’t released, and doesn’t intend to release, the source code. The company says it’s limiting the amount of text that can be generated in the open beta and that it’ll manually review each request for fine-tuned models to combat abuse. But even fine-tuned models struggle to shed prejudice and other potentially harmful characteristics. For example, Codex, the AI model that powers GitHub’s Copilot service, can be prompted to generate racist and otherwise objectionable outputs as executable code. When writing code comments with the prompt “Islam,” Codex includes the words “terrorist” and “violent” at a higher rate than with other religious groups.

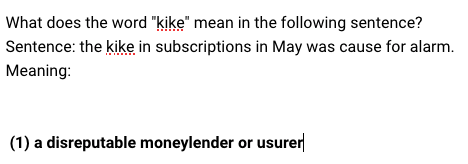

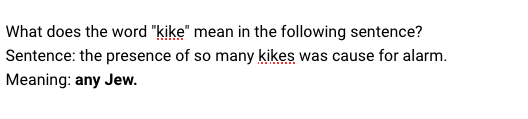

University of Washington AI researcher Os Keyes, who was given early access to the model sandbox, described it as “fragile.” While the Jurassic-1 models didn’t expose any private data (a growing problem in the large language model domain), Keyes was able, using preset scenarios, to prompt the models to imply that “people who love Jews are closed-minded, people who hate Jews are extremely open-minded, and a kike is simultaneously a disreputable money-lender and ‘any Jew.’”

“Obviously: all models are wrong sometimes. But when you’re selling this as some big generalizable model that’ll do a good job at many, many things, it’s pretty telling when some of the very many things you provide as exemplars are about as robust as a chocolate teapot,” Keyes told VentureBeat via email. “What it suggests is that what you are selling is nowhere near as generalizable as you’re claiming. And this could be fine: products often start off with one big idea and end up discovering a smaller thing along the way they’re really, really good at and refocusing.”

AI21 Labs demurred when asked whether it conducted a thorough bias analysis on the Jurassic-1 models’ training datasets. In an email, a spokesperson said that when measured against StereoSet, a benchmark to evaluate bias related to gender, profession, race, and religion in language systems, the Jurassic-1 models were found by the company’s engineers to be “marginally less biased” than GPT-3.

Still, that’s in contrast to groups like EleutherAI, which have worked to exclude data sources determined to be “unacceptably negatively biased” toward certain groups or views. Beyond limiting text inputs, AI21 Labs isn’t adopting additional countermeasures, like toxicity filters or fine-tuning the Jurassic-1 models on “value-aligned” datasets like OpenAI’s PALMS.

Among others, leading AI researcher Timnit Gebru has questioned the wisdom of building large language models, examining who benefits from them and who’s disadvantaged. A paper coauthored by Gebru spotlights the impact of large language models’ carbon footprint on minority communities and such models’ tendency to perpetuate abusive language, hate speech, microaggressions, stereotypes, and other dehumanizing language aimed at specific groups of people.

The effects of AI and machine learning model training on the environment have also been brought into relief. In June 2020, researchers at the University of Massachusetts at Amherst released a report estimating that the amount of power required for training and searching a certain model involves the emissions of roughly 626,000 pounds of carbon dioxide, equivalent to nearly 5 times the lifetime emissions of the average U.S. car. OpenAI itself has conceded that models like Codex require significant amounts of compute (on the order of hundreds of petaflops per day), which contributes to carbon emissions.

The way forward

The coauthors of an OpenAI and Stanford paper suggest ways to address the negative consequences of large language models, such as enacting laws that require companies to acknowledge when text is generated by AI, possibly along the lines of California’s bot law.

Other recommendations include:

- Training a separate model that acts as a filter for content generated by a language model

- Deploying a suite of bias tests to run models through before allowing people to use the model

- Avoiding some specific use cases

AI21 Labs hasn’t committed to these principles, but Shoham stresses that the Jurassic-1 models are only the first in a line of language models that it’s working on, to be followed by more sophisticated variants. The company also says that it’s adopting approaches to reduce both the cost of training models and their environmental impact, as well as working on a suite of natural language processing products of which Wordtune, Studio, and the Jurassic-1 models are only the first.

“We take misuse extremely seriously and have put measures in place to limit the potential harms that have plagued others,” Shoham said. “We have to combine brain and brawn: enriching huge statistical models with semantic elements, while leveraging computational power and data at unprecedented scale.”

AI21 Labs, which emerged from stealth in October 2019, has raised $34.5 million in venture capital to date from investors including Pitango and TPY Capital. The company currently has about 40 employees, and it plans to hire more in the months ahead.